Who Governs? The One Question That Dismantles Every AI Autonomy Claim

Most people don’t associate autonomy with governance. That’s the root of the problem.

I’ve seen posts arguing that governance and autonomy are separate concepts. One post argued that you can have “autonomous governance” as if that’s a meaningful category.

That’s what we call a pleonasm.

Autonomy is governance. Specifically, it’s self-governance. The word literally means “self-law,” from the Greek autos (self) and nomos (law). Saying “autonomous governance” is like saying “self-governing governance.” It reveals how deep the confusion about AI autonomy runs.

This matters because when people hear that an AI system “autonomously selects tools” or “autonomously plans a sequence of actions,” they are conflating logical automation with self-governance. Selecting a tool from a predefined toolkit based on pattern-matching against a human-defined objective is not self-regulation; it’s conditional execution. It’s an if/then statement.
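To make the if/then point concrete, here is a minimal sketch of tool selection as conditional execution. Everything in it is hypothetical: the `TOOLKIT` entries, the `ROUTING_RULES` keywords, and the function names are illustrative assumptions, not any real agent framework. Real agents usually replace the keyword match with a learned model, but the toolkit and the objective it matches against are still supplied from outside.

```python
# Hypothetical toolkit: every entry was written and registered by a human.
TOOLKIT = {
    "search": lambda request: f"searching for {request}",
    "calculate": lambda request: f"calculating {request}",
    "summarize": lambda request: f"summarizing {request}",
}

# Hypothetical routing rules, also human-defined: (keyword, tool name).
ROUTING_RULES = [
    ("how many", "calculate"),
    ("tl;dr", "summarize"),
]


def select_tool(request: str) -> str:
    """Return the name of the first tool whose keyword appears in the request."""
    lowered = request.lower()
    for keyword, tool_name in ROUTING_RULES:
        if keyword in lowered:  # the "if" in if/then
            return tool_name    # the "then"
    return "search"             # human-chosen default


def run(request: str) -> str:
    """Dispatch the request to whichever tool the rules picked."""
    return TOOLKIT[select_tool(request)](request)
```

The system "chooses" a tool, but every option, every rule, and the fallback were defined by someone else. Nothing in the sketch decides what counts as success.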

There are only two forms of governance for any system:

- Heteronomy — A system’s actions are governed by rules defined externally, by a human or another agent.

- Autonomy — A system governs its own actions without human participation in defining what counts as success.

Heteronomy = governed by others

Autonomy = governed by self

There is no other option.

A system either originates and controls its own terminal evaluation criteria, or something external does. There is no halfway state. You cannot be “73% self-governing” any more than you can be “73% pregnant.”

There are a lot of people who don’t like this. In my continuous work to prove AI is not autonomous, I’ve heard all of the rationales.

Yet, I can acknowledge that modern AI systems do impressive things. They plan, adapt, chain tasks, operate for days, and produce outputs that surprise their creators. Surely that counts for something beyond mere tool use?

These behaviors can be explained by legitimate engineering principles such as hierarchical task network (HTN) planning or conditional execution, yet they create a powerful illusion of autonomy.

That illusion is one of the main reasons so many people believe AI is autonomous.

Which, for a slight detour, is why I introduced a third term.

Simonomy: The Missing Middle

I have proposed a third concept that I call Simonomy.

- Simonomy — A system’s ability to govern actions learned through simulation.

Simonomy = governed by simulation

Simonomy acknowledges reality: these systems are performing increasingly complex tasks. Despite having a human participant somewhere in the chain, they are simulating complex sequences and governing substantial processes learned through modules and sequence simulation. That capability is real and growing.

But simulated governance is not self-governance. A flight simulator governs a convincing simulation of flight. The plane is not flying. An AI system simulating evaluative judgment is not actually originating its own success criteria. It still has “externally defined rules,” which came from the model’s simulations.

Simonomy sits in the space between what these systems do (which is remarkable) and what they are (which is heteronomous). It gives us honest language for the impressive middle without stealing the word “autonomy” to describe it. (Which we need to save for the moment in time when systems do become autonomous.)

Back to the Binary

Because there are technically only two forms of governance (heteronomy and autonomy), this creates a threshold. A system is either one or the other. A binary determination.

Which led me to develop the Autonomy Threshold Theorem (ATT).

The Autonomy Threshold Theorem

The Formal Version

S is autonomous ⟺ [∀c ∈ C(V_S): Source(c) = S] ∧ [Override(S) = ∅]

Translation: Every terminal success criterion must originate within the system, AND no external entity can override those criteria. Both must be true. Neither is true for any existing AI system.
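The two conditions can be written as a small predicate. This is a sketch under stated assumptions: the `Criterion` and `System` classes and their field names are hypothetical, chosen only to mirror C(V_S), Source(c), and Override(S) from the formal statement.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Criterion:
    """One terminal success criterion c, tagged with who originated it."""
    description: str
    source: str  # Source(c): hypothetical label for the originator


@dataclass
class System:
    """Hypothetical model of a system S for the threshold check."""
    name: str
    criteria: list[Criterion] = field(default_factory=list)  # C(V_S)
    overriders: set[str] = field(default_factory=set)        # Override(S)


def is_autonomous(system: System) -> bool:
    """True only if both conditions of the theorem hold."""
    # Condition 1: every criterion originates within the system itself.
    # Note: all() is vacuously True for an empty list, as with the formal ∀.
    all_internal = all(c.source == system.name for c in system.criteria)
    # Condition 2: the set of external overriders is empty.
    no_override = not system.overriders
    return all_internal and no_override


# Usage: AlphaZero's reward was defined by a human, and a human can stop it.
alphazero = System(
    name="AlphaZero",
    criteria=[Criterion("win = +1", source="human designer")],
    overriders={"human controller"},
)
```

Here `is_autonomous(alphazero)` returns `False` because both conditions fail. Removing the overrider alone would not change the answer, since the criterion still has an external source.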

The Plain English Version

A = S − U

H = S + U

- Autonomy = System − Human

- Heteronomy = System + Human

If a human appears anywhere in the terminal evaluation chain, such as defining what success means, judging whether the system succeeded, or retaining the ability to override the system’s goals, the system is heteronomous.

It doesn’t matter how long the system runs. It doesn’t matter how many tasks it chains together. It doesn’t matter how impressive the output looks.

We only have one question: Is there a human in the evaluation loop?

If yes → Heteronomous.

Where Do Humans Appear?

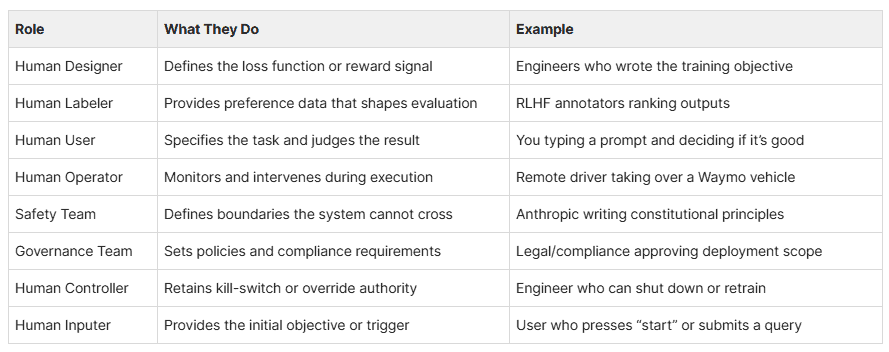

These are the roles that keep the evaluation loop open. If ANY of these appear in the system’s lifecycle, the system is not autonomous:

The above table is a small selection.

Every existing AI system has multiple humans in multiple roles across its evaluation chain. The V-loop is open at every point.

Examples

ChatGPT / Claude / Gemini

Human Designer → defines training objective

Human Labeler → ranks outputs for RLHF

Safety Team → writes constitutional principles

Human User → types prompt, judges response

Human Controller → can retrain or update model

H = S + U + U + U + U + U

Result: Heteronomous

Tesla FSD / Waymo

Human Designer → defines operational design domain

Human Designer → defines safety scoring

Human Operator → remote intervention capability

Human User → sets destination

Governance Team → regulatory safety requirements

H = S + U + U + U + U + U

Result: Heteronomous

Agentic AI (Devin, AutoGPT, Operator)

Human User → defines the task

Safety Team → sets guardrails

Human User → reviews and approves output

Human Controller → can stop, redirect, or retrain

H = S + U + U + U + U

Result: Heteronomous

AlphaZero

Human Designer → defines reward (win = +1)

H = S + U

Result: Heteronomous

Even AlphaZero, which taught itself chess through pure self-play and surpassed all human knowledge of the game, is heteronomous. It never once questioned whether winning was the point. A human decided that. A human started it. A human evaluated it. A human can stop it. One human. One criterion. Still heteronomous.
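The examples above can be tallied mechanically. The sketch below transcribes the human roles listed for each system and applies the plain-English rule: one U per human role, and any U at all means heteronomous. The dictionary layout and the function names are illustrative assumptions.

```python
# Human roles per system, transcribed from the examples above.
EXAMPLES = {
    "ChatGPT / Claude / Gemini": [
        "Human Designer", "Human Labeler", "Safety Team",
        "Human User", "Human Controller",
    ],
    "Tesla FSD / Waymo": [
        "Human Designer", "Human Designer", "Human Operator",
        "Human User", "Governance Team",
    ],
    "Agentic AI (Devin, AutoGPT, Operator)": [
        "Human User", "Safety Team", "Human User", "Human Controller",
    ],
    "AlphaZero": ["Human Designer"],
}


def equation(roles: list[str]) -> str:
    """Render the governance equation: one '+ U' per human role."""
    if not roles:
        return "A = S"
    return "H = S" + " + U" * len(roles)


def classify(roles: list[str]) -> str:
    """A single human in the evaluation chain is enough for heteronomy."""
    return "Heteronomous" if roles else "Autonomous"


for system_name, roles in EXAMPLES.items():
    print(f"{system_name}: {equation(roles)} -> {classify(roles)}")
```

The count of humans changes the length of the equation but never the result. Only an empty list crosses the threshold.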

The Test

For any system claiming to be “autonomous,” ask two questions:

1. Who defined what success means?

→ If a human → NOT autonomous

2. Can any human override that definition?

→ If yes → NOT autonomous

That’s it. Two questions. Every AI system in existence fails both.

What Would Autonomy Actually Look Like?

A = S − U

No human designer defining objectives.

No human labeler shaping preferences.

No human user specifying tasks.

No human operator intervening.

No safety team setting boundaries.

No governance team setting policies.

No human controller with override.

No human inputer triggering action.

The system defines its own success.

The system evaluates its own performance.

No external entity can change that.

A = S

This system does not exist. No one has built it. No one has proposed a concrete architecture for it. It may not be possible.

So Where Are We?

Every AI system marketed as “autonomous” in 2025 and 2026 is heteronomous. They are externally governed by humans at every critical evaluation point.

Many of these systems exhibit remarkable simonomy: simulated governance learned through training, capable of executing complex sequences that look and feel like autonomous action.

But simulation is not instantiation. Looking like self-governance is not self-governance. The evaluation loop is open. The humans are always there.

- Heteronomy = External governance ← Every current AI system

- Simonomy = Simulated governance ← What these systems actually do

- Autonomy = Self-governance ← No existing system. Maybe none ever.

There are no autonomous AI systems. There are only sophisticated tools, some of which simulate governance remarkably well.

Once again, this has no impact on what the system is capable of doing. It can still select tools, it can still operate continuously, it can still “self-drive.” This is about language accuracy, accountability, and precision in the AI tools we build.

Autonomy was always about governance. The question was never “how capable is the system?” The question is, and always has been: who governs it?


Chris Hood is an AI strategist and author of the #1 Amazon Best Seller Infailible and Customer Transformation, and has been recognized as one of the Top 30 Global Gurus for Customer Experience. His latest book, Unmapping Customer Journeys, will be published in 2026.