Scaling AI Governance Teams: Six Structures for Managing AI Agents

Every organization deploying AI agents is building a governance function. The question is whether they know it yet.

Some have formalized it: a dedicated team, executive sponsorship, and cross-functional contributors. Others have one person quietly trying to hold things together while the rest of the organization moves fast. A few have nothing at all, and they are discovering what that costs in real time.

There is no single right structure. There are six. And where an organization lands depends on how seriously it takes the relationship between governance and deployment velocity.

The Six Structures of AI Governance

Governance maturity follows a recognizable pattern. Organizations start informally and either stall, formalize, or scale. The structure reveals itself in how decisions get made and who gets to make them.

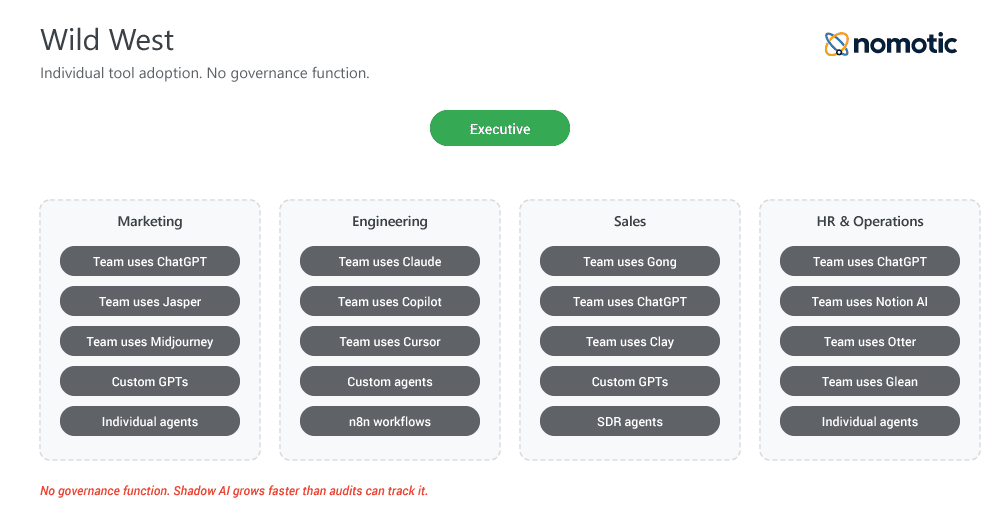

Wild West

AI use functions like an employee benefit. Anyone can deploy anything. Individual contributors adopt tools independently, teams use different models for the same tasks, and leadership has limited visibility into what is running or what it is doing. This is the most common starting point, and the most dangerous one to stay in. Shadow AI grows in these environments faster than any audit can catch it, and the first real incident is usually how leadership learns the inventory exists.

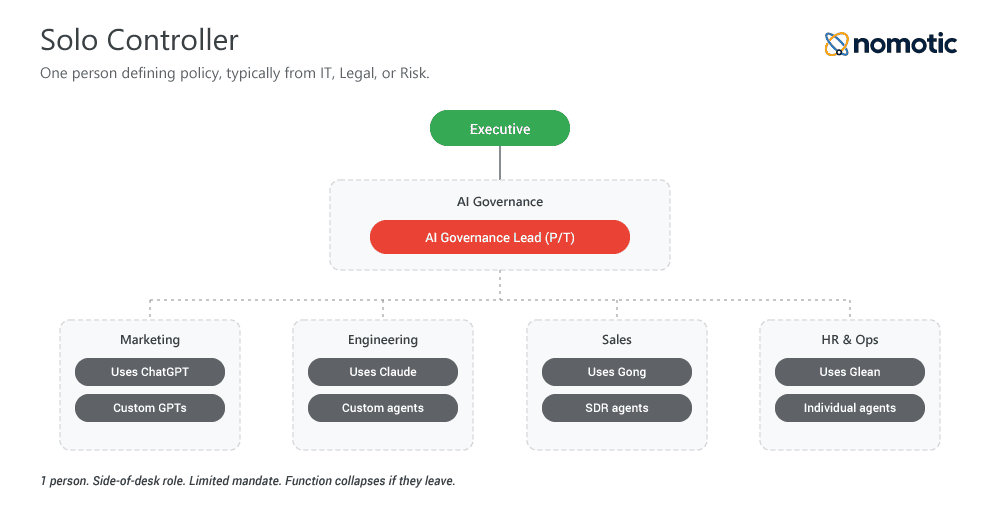

Solo Controller

One person, typically sitting somewhere in IT, legal, or risk, is defining how AI should be governed. They are building policy with a limited mandate and a limited budget. Progress is real. Fragility is high. The moment that person leaves, changes roles, or burns out, the function collapses. Solo Controller is better understood as a stage of AI adoption than as a lasting structure.

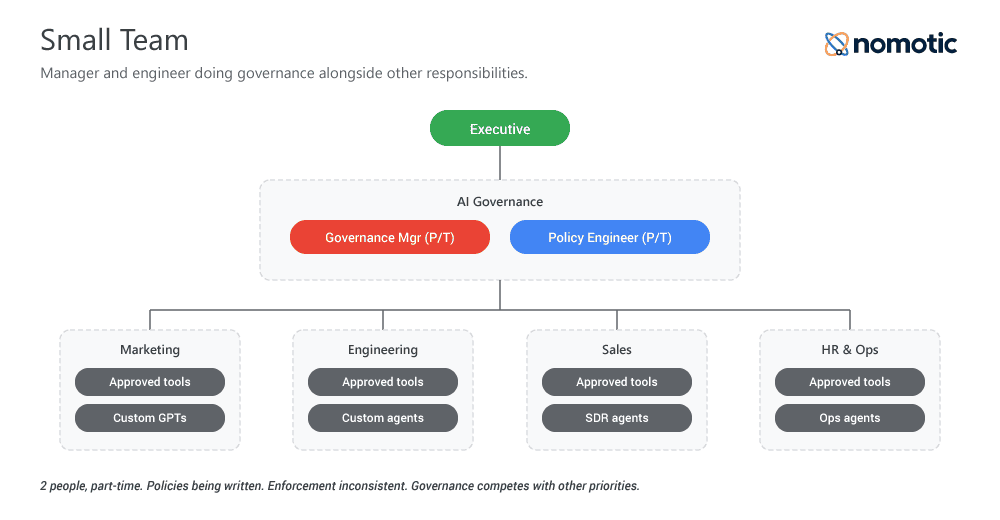

Small Team

A manager and an engineer are doing governance work alongside their other responsibilities. Policies are being written. Some enforcement is happening. Cross-functional collaboration is inconsistent but emerging. The work is real, but the attention is divided, which means governance competes with every other priority on the team’s plate and usually loses.

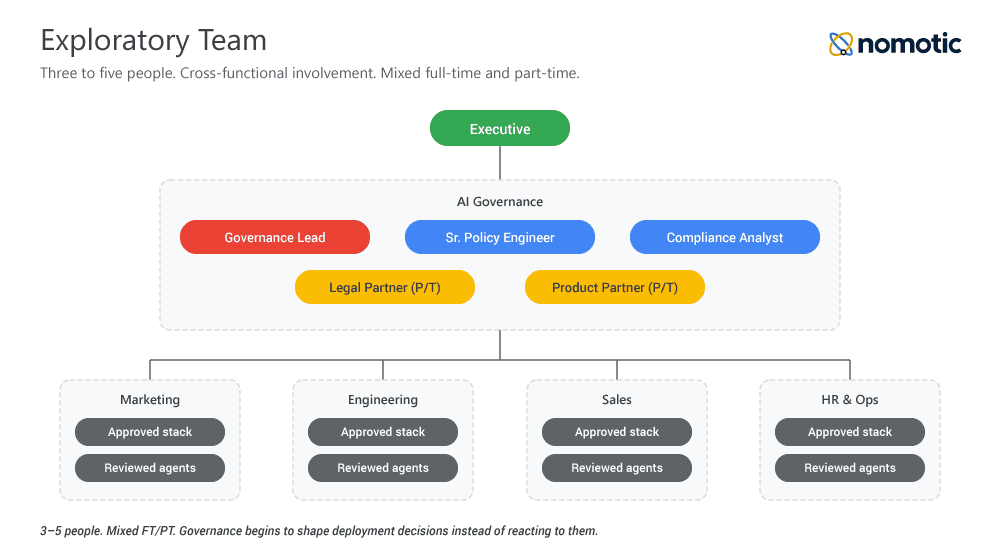

Exploratory Team

Three to five people building governance policies with cross-functional involvement. Some roles are full-time, some are part-time. The organization is starting to treat governance as infrastructure rather than overhead. This is the first structure in which governance begins to shape deployment decisions rather than react to them.

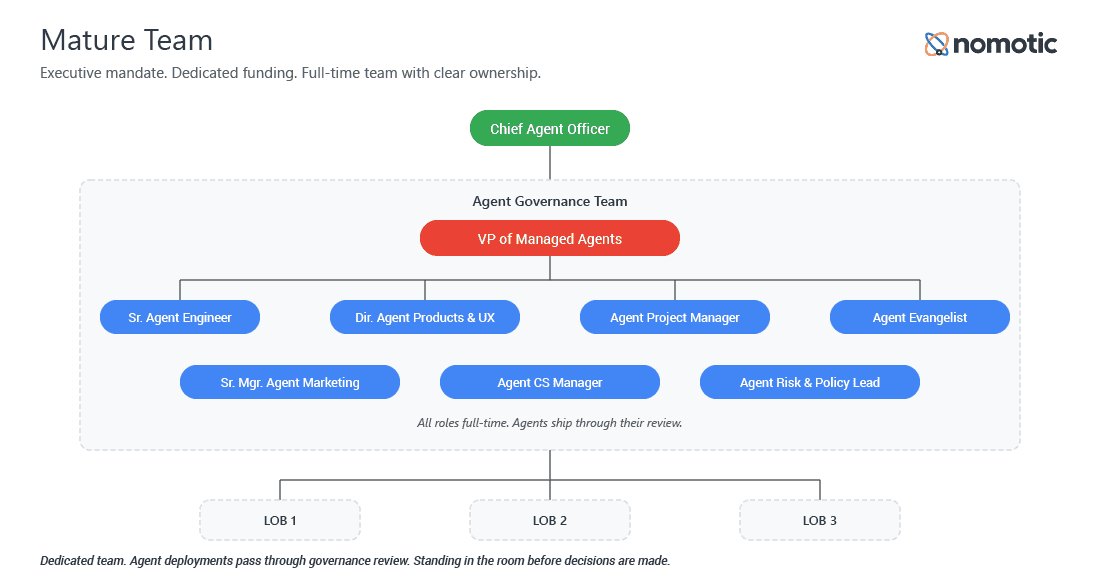

Mature Team

An executive mandate. Dedicated funding. A full-time team with clear ownership and the organizational standing to shape deployment decisions before they happen. Mature Teams own the policy layer, the enforcement layer, and the conversation with leadership about risk. Every agent ships through their review, and that review happens early enough to matter.

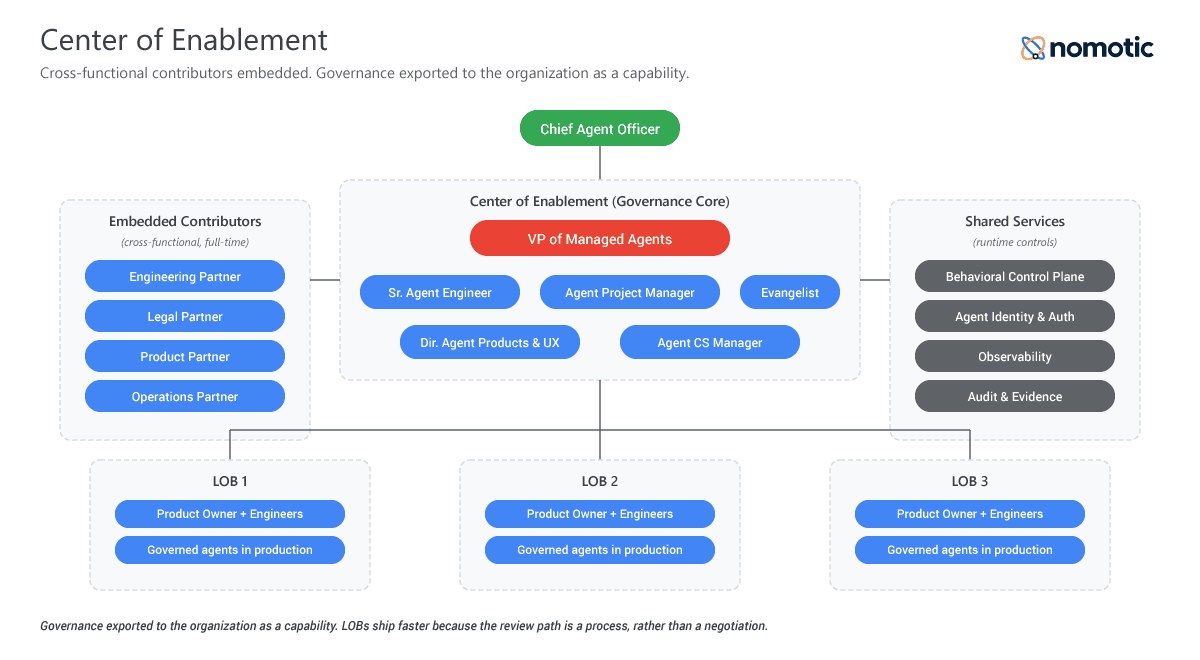

Center of Enablement

A Mature Team with full-time cross-functional contributors embedded from engineering, legal, product, and operations, together managing the complete AI governance lifecycle. What distinguishes this structure from the others is that governance is exported to the rest of the organization as a capability. It enables rather than constrains. Product teams route through governance because it helps them ship.

Roles to Consider

A few roles are worth building around. These are human roles. Agent counterparts like Axon, the CAO Agent; Juyn, the VP of Operations Agent; or Devi, the Sr. Engineering Agent, belong to a separate structural conversation about how agents themselves operate within the organization. The governance function starts with people.

- Chief AI Officer / Chief Agent Officer / Chief Agentic Officer. Executive ownership of the AI or agent portfolio. Managing AI models and managing AI agents are different problems. One is about capability. The other is about behavior, authorization, and accountability at runtime. The title choice reflects which problem the organization is actually solving.

- VP of Managed Agents. Operating lead. Owns the framework, the team, and the decisions that sit at the intersection of engineering and organizational risk. The translation layer between what the agents are doing and what the business is willing to accept.

- Sr. Agent Engineer. Owner of the technical infrastructure, including the runtime controls that keep agents inside their boundaries.

- Director of Agent Products & UX. Owner of the agent-facing surface and what users actually encounter when they interact with an agent.

- Agent Project Manager. Keeps governance work moving and coordinates across contributors from engineering, legal, product, and operations.

- Agent Community Evangelist. Brings the rest of the organization along. Governance fails the moment it becomes something done to teams rather than with them.

- Sr. Manager of Agent Marketing. Owns how the organization communicates about its AI capabilities, internally and externally.

- Agent Customer Success Manager. Owns outcomes for agents deployed in customer-facing contexts.

Cross-functional teams built from these roles are what anchor a Mature Team or Center of Enablement structure. Part-time attention produces part-time results.

Where the Governance Function Should Sit

The reporting structure shapes everything about how a governance function operates.

Governance sitting inside legal optimizes for liability protection. Inside engineering, it optimizes for deployment speed. Inside marketing, it optimizes for brand. None of these is wrong. All of them are incomplete. A governance function captured by a single domain will reflect that domain’s priorities and underweight the others.

The Center of Enablement structure works best as an independent function with direct executive access and cross-functional contributors rather than cross-functional reporting lines. This gives the team organizational breadth without organizational capture. Every domain has a voice. No single domain sets the agenda.

Independence matters most when the decision is hard. When engineering wants to ship, legal wants to block, and product wants to negotiate, the governance function needs the standing to hold the center. That standing comes from reporting structure, executive backing, and a clear mandate that predates the current disagreement. Organizations that try to build that standing in the middle of a crisis usually discover the gap too late to close it.

Why Governance Accelerates Deployment

The common assumption is that governance slows things down. The data points the other way.

Research suggests companies with governance infrastructure in place deploy significantly more AI projects to production than those without it. The reason is organizational clarity. When the questions of who authorized this agent, what data it accesses, and what happens when it acts outside its boundary have answers before deployment begins, deployment moves faster.

Without governance, every new agent raises the same unanswered questions, often when a deadline is already slipping. With governance, those questions have standing answers. Teams know what approvals look like, what controls are required, and what counts as an acceptable risk. The path from prototype to production becomes a process rather than a negotiation.

This is the inversion most leadership teams miss. Governance is not a brake on deployment. It is the infrastructure that makes velocity sustainable.

Moving Between Structures

Most organizations will pass through more than one of these structures on the way to where they need to be. The movement is rarely linear. A Small Team can collapse back to a Solo Controller after a reorg. An Exploratory Team can stall for years without executive sponsorship. A Mature Team can fail to become a Center of Enablement because it protects its territory rather than exporting its capability.

The transitions that matter most are the ones that change the mandate. Solo Controller to Small Team is a staffing change. Exploratory Team to Mature Team is a mandate change, and it usually requires a specific executive decision rather than organic growth. That decision is the one most organizations delay until an incident forces them to act.

Six structures. One direction. Which structure is your organization actually running, and which one does it think it’s running?

Chris Hood is an AI strategist and author of the #1 Amazon Best Seller Infailible and Customer Transformation, and has been recognized as one of the Top 30 Global Gurus for Customer Experience. His latest book, Unmapping Customer Journeys, will be published in 2026.