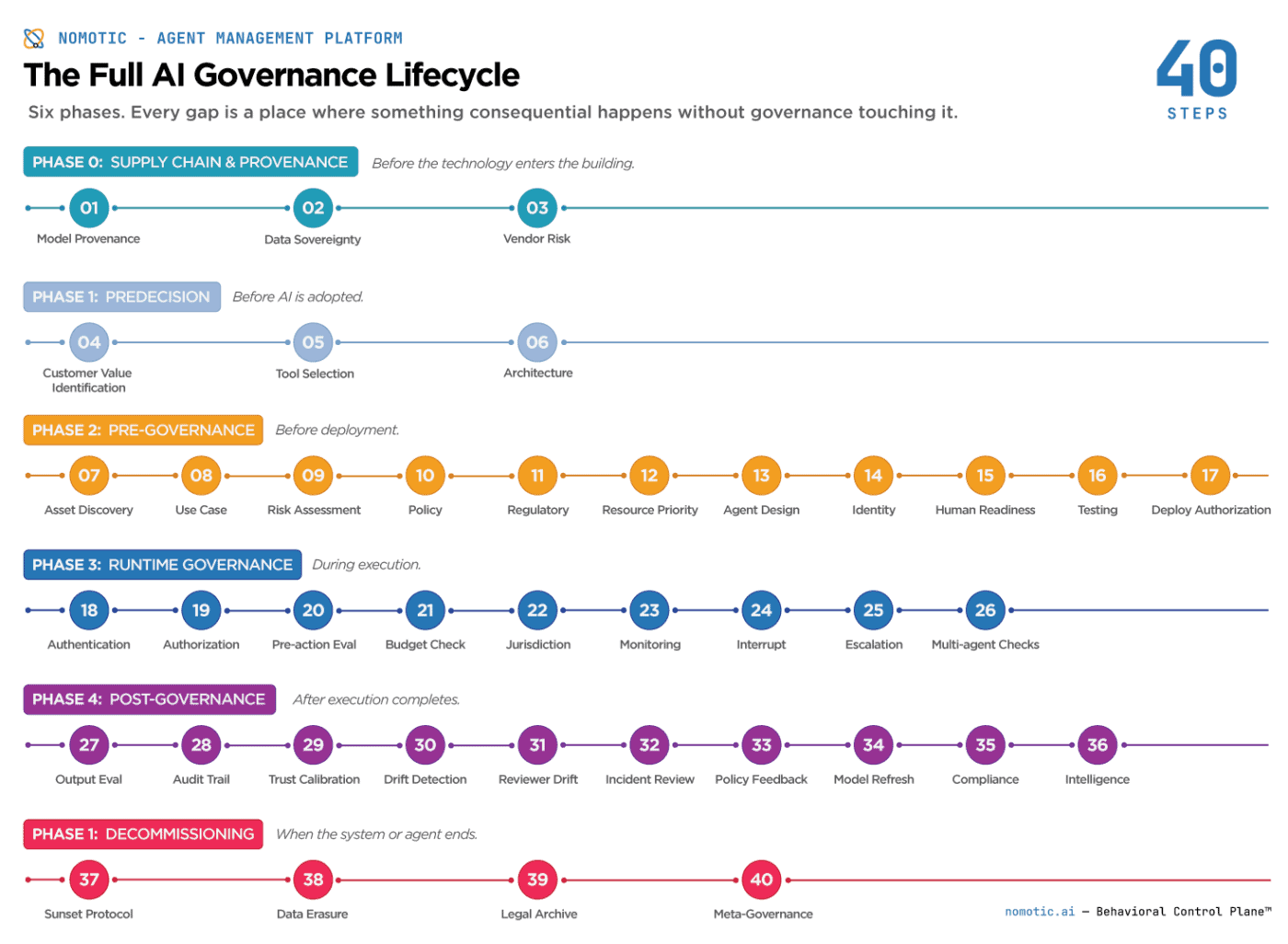

The Full AI Governance Lifecycle in 40 Steps

Ask anyone working in AI governance what they do, and they will tell you their piece is the most critical. Of course, it doesn’t help that most comments on social media are now generated by AI.

- “What stands out to me…”

- “The tension I keep seeing…”

- “The layer I’d add…”

- “We address a gap upstream…”

- “We address a gap downstream…”

- “Strong framing, where I’d push…”

- “The question I keep coming back to…”

I have yet to meet anyone who does not try to position their AI governance platform, framework, or idea as “something different than what you are talking about.”

Organizations buy point solutions for the pieces that generated the most anxiety recently, stitch them together with hope and documentation, and call the result a governance framework. Most of the time, it is several pieces of a framework with significant gaps between them, none of which anyone owns.

The governance lifecycle is not a moment. It is a continuous loop. And every gap in that sequence is a place where something consequential can happen without governance touching it.

It made me think: if there are so many opinions about different parts of the governance lifecycle, what exactly is the full picture?

Here is the full sequence. All 40 steps, from supply chain provenance before the technology enters the building to decommissioning after it leaves.

Phase 0: Supply Chain and Provenance

This phase is almost entirely absent from current governance frameworks. It addresses the ingredients of the AI before any organizational decision is made.

Step 1. Model provenance. Where did the base model come from? Is it an open-weight model run locally or a closed API? What do the model cards say? What safety filters or architectural changes has the provider implemented, and how are those changes approved before they affect your deployment? If your base model provider changes their architecture, that change touches your governance configuration whether you know about it or not.

Step 2. Data sovereignty. Was the model trained on data that the organization has the right to use? What are the IP implications of the outputs? Data governance is not a parallel track. It is foundational to everything downstream. Poisoned or legally problematic training data is a governance failure that no runtime control can retroactively fix.

Step 3. Vendor and third-party risk. If you are consuming foundation models, APIs, or external tools, what are the SLAs for each? What governance portability clauses exist in the contracts? What happens to your audit trail if the vendor changes their terms or goes offline? Agentic systems chain external tools and amplify third-party risk at every step.

Phase 1: Predecision

This is the phase when the most consequential organizational decisions are made, often without a governance framework.

Step 4. Customer value identification. This really should be step 1, but for the sake of existing infrastructure, I’ve placed it as close to first as possible. What are you actually trying to solve? Not what AI can do, and not what your customers might theoretically benefit from, but what specific problem the organization has, grounded in what customers actually need. These are different questions, and conflating them produces deployments that are technically impressive but operationally useless, built for a customer who was never consulted.

Step 5. Tool selection. Is AI the right solution? If so, which type? An LLM for tasks that require deterministic outputs is an architectural mismatch that creates governance complexity that didn’t need to exist. The selection of the right tool is itself a governance decision. And it does not have to be an LLM.

Step 6. Architecture decision. LLM, deterministic, hybrid, or traditional software. The architecture determines what governance is possible. You cannot retroactively apply runtime behavioral governance to a system that wasn’t designed for it.

Phase 2: Pre-Governance

Step 7. Asset discovery and inventory. Before anything else in this phase, the organization needs to know what already exists. Shadow agents are a real and growing problem. Identity registration assumes you know what you have. Most organizations don’t. A centralized AI asset inventory is the foundational control that makes all subsequent steps coherent.

Step 8. Use case definition. Specific scope, not general ambition. “AI-powered customer service” is not a use case. “Agent authorized to process returns under $200 without human review” is a use case. Governance requires specificity.
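
To make that difference concrete, here is a minimal Python sketch of the returns example written as a checkable scope. The names (`UseCase`, `requires_human_review`) are hypothetical, and the $200 limit is taken from the example above; a real definition would carry more fields than this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """A use case narrow enough to be checked by code (illustrative only)."""
    action: str            # the one action the agent may take
    max_amount_usd: float  # amounts at or above this limit need a human

    def requires_human_review(self, action: str, amount_usd: float) -> bool:
        # Anything other than the named action is outside the use case entirely.
        if action != self.action:
            raise ValueError(f"'{action}' is outside the defined use case")
        # "Under $200" means strictly less than the limit.
        return amount_usd >= self.max_amount_usd


returns_agent = UseCase(action="process_return", max_amount_usd=200.0)
print(returns_agent.requires_human_review("process_return", 149.99))  # False
print(returns_agent.requires_human_review("process_return", 200.00))  # True
```

“AI-powered customer service” cannot be expressed this way, which is the point: if the scope cannot be written down as a check, it cannot be governed as one.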

Step 9. Risk assessment. What could go wrong, at what consequence level, with what reversibility? This is where governance intensity per action type gets calibrated. Irreversible high-stakes actions require a different architecture than reversible low-stakes ones.
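
One way to picture that calibration is a small lookup from consequence level and reversibility to a governance tier. The sketch below is an assumption, not a standard: the tier names and the three consequence levels are invented for illustration.

```python
from enum import IntEnum


class Consequence(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def governance_tier(consequence: Consequence, reversible: bool) -> str:
    """Map an action type to a governance intensity (hypothetical tiers)."""
    if consequence is Consequence.HIGH and not reversible:
        return "human_approval_required"  # irreversible and high stakes
    if consequence is Consequence.HIGH or not reversible:
        return "pre_action_review"        # one of the two risk factors is present
    if consequence is Consequence.MEDIUM:
        return "monitored"
    return "log_only"                     # reversible and low stakes


print(governance_tier(Consequence.HIGH, reversible=False))  # human_approval_required
print(governance_tier(Consequence.LOW, reversible=True))    # log_only
```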

Step 10. Policy development. What rules apply to this system, written in terms that can be enforced rather than just documented? A policy that exists in a PDF and not in a runtime enforcement layer is a statement of intent, not a governance control.

Step 11. Regulatory alignment. What compliance requirements apply? EU AI Act Articles 9, 12, 13, 14 for high-risk systems. HIPAA for healthcare. SOX for financial reporting. The compliance requirements determine what evidence the governance infrastructure must produce.

Step 12. Resource allocation and priority. In a resource-constrained environment, which agents get priority access to compute and tokens? What are the SLAs per agent category? Governance should define the importance of agents before a crisis forces the question.

Step 13. Agent design. Behavioral contracts, scope definition, and authority boundaries. What this agent is authorized to do, what it is explicitly not authorized to do, what escalation paths exist, and what the suspension criteria are. The behavioral contract is the governance specification.

Step 14. Identity registration. Cryptographic birth certificate issued. Human owner assigned. Governance zone established. Archetype bound. This is the step that makes everything downstream attributable. Anonymous agents are ungovernable.
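
As a rough illustration, registration can be thought of as issuing a signed record that binds an agent to its owner, zone, and archetype. The sketch below uses an HMAC from the Python standard library as a stand-in for a real signature; the key, field names, and function names are all assumptions, and a production registry would more likely use asymmetric keys and a proper certificate format.

```python
import hashlib
import hmac
import json
import uuid
from datetime import datetime, timezone

# Assumption: demo key only. A real registry would use a managed signing key.
REGISTRY_KEY = b"demo-only-secret"


def register_agent(owner: str, zone: str, archetype: str) -> dict:
    """Issue a signed identity record binding an agent to owner, zone, and archetype."""
    if not owner:
        raise ValueError("every agent needs a named human owner")
    record = {
        "agent_id": str(uuid.uuid4()),
        "owner": owner,
        "zone": zone,
        "archetype": archetype,
        "issued_at": datetime.now(timezone.utc).isoformat(),
    }
    payload = json.dumps(record, sort_keys=True).encode()
    signature = hmac.new(REGISTRY_KEY, payload, hashlib.sha256).hexdigest()
    return {**record, "signature": signature}


def verify_certificate(cert: dict) -> bool:
    """Recompute the signature; any edited field makes verification fail."""
    record = {k: v for k, v in cert.items() if k != "signature"}
    payload = json.dumps(record, sort_keys=True).encode()
    expected = hmac.new(REGISTRY_KEY, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, cert["signature"])


cert = register_agent(
    owner="jane.doe@example.com",
    zone="customer-service",
    archetype="returns-processor",
)
print(verify_certificate(cert))                        # True
print(verify_certificate({**cert, "zone": "finance"}))  # False
```

Refusing to register an agent without an owner is the part that matters most here. The signature only proves the record was not altered; the owner field is what makes the agent attributable.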

Step 15. Human capability building. Training and change management before deployment. Who reviews escalations, under what SLA, with what context? Agents change workflows more than traditional software does, and the humans in the governance chain need to be prepared before the system is live, not after the first incident.

Step 16. Testing and validation. Behavioral testing against the contract. Red team exercises. Edge case evaluation. Adversarial testing. This is not quality assurance for functionality. It is verification that the governance constraints hold under pressure.

Step 17. Deployment authorization. Formal sign-off by a named human with the authority to make that call. Not a merged pull request. A decision, on record, by an accountable person.

Phase 3: Runtime Governance

Step 18. Authentication. Is this the verified agent it claims to be? Certificate validation. Status check. An agent with a revoked or expired certificate does not proceed.
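
The status check itself can be very small. This sketch assumes signature validation has already happened elsewhere and only shows the revoked-or-expired gate; `Certificate`, `authenticate`, and the revocation set are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Set


@dataclass(frozen=True)
class Certificate:
    agent_id: str
    expires_at: datetime


def authenticate(cert: Certificate, revoked_ids: Set[str], now: datetime) -> bool:
    """Return True only if the certificate is neither revoked nor expired."""
    if cert.agent_id in revoked_ids:
        return False
    if now >= cert.expires_at:
        return False
    return True


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
revocation_list = {"agent-003"}
valid = Certificate("agent-001", now + timedelta(days=30))
expired = Certificate("agent-002", now - timedelta(days=1))
revoked = Certificate("agent-003", now + timedelta(days=30))

for cert in (valid, expired, revoked):
    print(cert.agent_id, authenticate(cert, revocation_list, now))
```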

Step 19. Authorization. Is this agent permitted to act in this context? Scope check. Zone validation. Authority verification. This is the step most vendors claim as their primary value. It is one step of nine in the runtime phase.

Step 20. Pre-action evaluation. Multidimensional behavioral scoring before execution. The confidence score that determines whether the action proceeds, is modified, is escalated, or is denied. This is where the “should” question gets answered, not just the “can.”
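
A minimal sketch of what such an evaluation might look like: several dimension scores are combined into one confidence value, which is then mapped to one of the four verdicts. The dimensions, weights, and thresholds below are all assumptions chosen for illustration, not a recommended scoring model.

```python
from typing import Dict

# Hypothetical dimensions and weights (they sum to 1.0).
WEIGHTS = {"scope_fit": 0.4, "risk": 0.3, "history": 0.2, "context": 0.1}


def confidence(scores: Dict[str, float]) -> float:
    """Weighted average of per-dimension scores, each expected in [0, 1]."""
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


def verdict(scores: Dict[str, float]) -> str:
    """Turn the confidence score into one of the four outcomes."""
    c = confidence(scores)
    if c >= 0.85:
        return "proceed"
    if c >= 0.70:
        return "modify"
    if c >= 0.50:
        return "escalate"
    return "deny"


request = {"scope_fit": 0.9, "risk": 0.4, "history": 0.7, "context": 0.6}
print(verdict(request))
```

In this example the agent is clearly in scope (`scope_fit` of 0.9), so an authorization check alone would pass it. The weak `risk` score is what pulls the overall verdict away from "proceed".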

Step 21. Budgetary governance. Does this action make economic sense? An agent that wants to run a $50 compute task to solve a $5 problem should be interrupted. Cost-to-benefit evaluation is a governance dimension that most frameworks omit entirely and that production deployments encounter immediately.
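
The $50-for-$5 example reduces to a simple comparison. The sketch below assumes cost and value estimates are available before the action runs, which is itself a big assumption; `budget_check` and `max_cost_ratio` are hypothetical names.

```python
def budget_check(
    estimated_cost_usd: float,
    estimated_value_usd: float,
    max_cost_ratio: float = 1.0,
) -> bool:
    """Allow the action only if its cost stays within the allowed share of its value."""
    if estimated_value_usd <= 0:
        return False  # no identifiable value, so no spend is justified
    return estimated_cost_usd <= estimated_value_usd * max_cost_ratio


print(budget_check(50.0, 5.0))  # False: a $50 task for a $5 problem is interrupted
print(budget_check(0.50, 5.0))  # True
```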

Step 22. Cross-border jurisdictional check. If an agent is interacting with a user in the EU but the compute is in the US, is the data transfer governed? Jurisdictional compliance is a runtime check because the agent’s operational context changes with every request.
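
As a rough sketch, the runtime check can be a lookup against a register of approved data routes, evaluated on every request. The register contents, region codes, and reference IDs below are placeholders; which transfers are actually lawful is a question for counsel, not for this table.

```python
from typing import Optional

# Hypothetical register: (user_region, compute_region) -> reference to the
# documented transfer mechanism that legal has approved. Placeholder values.
TRANSFER_REGISTER = {
    ("EU", "EU"): "in-region",
    ("US", "US"): "in-region",
    ("EU", "US"): "legal-ref-0001",
}


def transfer_basis(user_region: str, compute_region: str) -> Optional[str]:
    """Return the approved mechanism reference, or None if the route is ungoverned."""
    return TRANSFER_REGISTER.get((user_region, compute_region))


def handle_request(user_region: str, compute_region: str) -> str:
    basis = transfer_basis(user_region, compute_region)
    if basis is None:
        return "blocked: no documented basis for this data transfer"
    return f"allowed under {basis}"


print(handle_request("EU", "US"))    # allowed under legal-ref-0001
print(handle_request("EU", "APAC"))  # blocked: no documented basis for this data transfer
```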

Step 23. Trust and execution monitoring. Continuous observation during the action. Not just before and after, but during. The governance layer operates in parallel with execution, maintaining real-time visibility into what is happening and the agent’s ongoing trust calculation.

Step 24. Interrupt authority. The mechanical capability to halt mid-execution when a threshold is crossed. Not an alert. Not a notification. An actual stop, before irreversible consequences occur. This is the step that makes all other runtime governance real rather than advisory.
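
The difference between an alert and a stop is easier to see in code. In this sketch, the executor checks a threshold before every step and refuses to invoke the step that would cross it. The `Step` type, the per-step risk numbers, and the threshold are assumptions made up for the example.

```python
from dataclasses import dataclass
from typing import Callable, List


class Interrupted(Exception):
    """Raised when the governance layer halts execution mid-task."""


@dataclass
class Step:
    name: str
    risk: float  # hypothetical per-step risk contribution
    run: Callable[[], None]


def execute(steps: List[Step], risk_threshold: float) -> List[str]:
    """Run steps in order, stopping before the step that would cross the threshold."""
    accumulated = 0.0
    completed: List[str] = []
    for step in steps:
        if accumulated + step.risk > risk_threshold:
            # A hard stop: the step is never invoked, so it cannot take effect.
            raise Interrupted(f"halted before '{step.name}' after {completed}")
        step.run()
        accumulated += step.risk
        completed.append(step.name)
    return completed


log: List[str] = []
plan = [
    Step("verify_order", 0.2, lambda: log.append("verify_order")),
    Step("draft_refund", 0.2, lambda: log.append("draft_refund")),
    Step("wire_funds", 0.9, lambda: log.append("wire_funds")),
]

try:
    execute(plan, risk_threshold=1.0)
except Interrupted as exc:
    print(exc)

print(log)  # ['verify_order', 'draft_refund']
```

The irreversible step, `wire_funds`, never runs. An alerting system would have let it run and told someone afterward.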

Step 25. Escalation routing. When the evaluation produces an escalation verdict, the action routes to human review with the context needed to make a meaningful decision. Not an approval queue with a name attached. A genuine oversight function.

Step 26. Multi-agent handoff governance. When one agent delegates to another, the governance layer evaluates the handoff itself. Accountability does not transfer with the delegation. The compound authority of the chain is assessed, not just the individual agent’s scope.

Phase 4: Post-Governance

Step 27. Output evaluation. Was what was produced appropriate? Not just whether the action was permitted, but whether the output aligns with the behavioral contract and organizational intent.

Step 28. Audit trail verification. The hash-chained, tamper-evident record of what happened. Every action is attributable to a specific verified identity. Every governance evaluation is linked to the action it governs. Every verdict is sealed and cryptographically verifiable.
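
For readers who have not seen a hash chain, here is a minimal Python sketch. Each record stores the hash of the record before it, so editing any earlier entry breaks every hash after it. The field names and helper functions are assumptions; the sketch also leaves out signing and external anchoring of the latest hash, which a real tamper-evident log would need.

```python
import hashlib
import json
from typing import List

GENESIS = "0" * 64  # placeholder "previous hash" for the first record
FIELDS = ("agent_id", "action", "verdict")


def _digest(prev_hash: str, entry: dict) -> str:
    """Hash the entry together with the previous record's hash."""
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode()).hexdigest()


def append_record(chain: List[dict], agent_id: str, action: str, verdict: str) -> None:
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {"agent_id": agent_id, "action": action, "verdict": verdict}
    chain.append({**entry, "prev_hash": prev_hash, "hash": _digest(prev_hash, entry)})


def verify_chain(chain: List[dict]) -> bool:
    """Recompute every hash from the start; any edit or reordering fails."""
    prev_hash = GENESIS
    for record in chain:
        entry = {k: record[k] for k in FIELDS}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(prev_hash, entry):
            return False
        prev_hash = record["hash"]
    return True


trail: List[dict] = []
append_record(trail, "agent-001", "process_return", "proceed")
append_record(trail, "agent-001", "issue_refund", "escalate")
print(verify_chain(trail))  # True

# Simulate someone quietly rewriting history on a copy of the trail.
tampered = [dict(r) for r in trail]
tampered[0]["verdict"] = "deny"
print(verify_chain(tampered))  # False
```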

Step 29. Trust calibration. Trust scores updated based on outcomes. Violations decrease trust asymmetrically. One violation costs five successful actions to recover from. The asymmetry reflects real-world risk.
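
The five-to-one ratio can be written down directly. This sketch uses whole trust points on an assumed 0 to 100 scale; the scale, the point values, and `update_trust` are illustrative, and only the 5:1 asymmetry comes from the step above.

```python
SUCCESS_GAIN = 1                      # trust points per successful action (assumed)
VIOLATION_PENALTY = 5 * SUCCESS_GAIN  # one violation undoes five successes
MIN_TRUST, MAX_TRUST = 0, 100         # assumed scale


def update_trust(score: int, violated: bool) -> int:
    """Apply an asymmetric update and clamp the result to the trust scale."""
    delta = -VIOLATION_PENALTY if violated else SUCCESS_GAIN
    return max(MIN_TRUST, min(MAX_TRUST, score + delta))


score = 60
score = update_trust(score, violated=True)       # drops to 55
for _ in range(5):
    score = update_trust(score, violated=False)  # five successes to climb back
print(score)  # 60
```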

Step 30. Behavioral drift detection. Has the agent’s behavioral pattern shifted from its established baseline? Action drift, target drift, temporal drift, outcome drift, and semantic drift are each tracked independently.
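
One simple way to track dimensions independently is to compare the distribution of recent events against the baseline, one dimension at a time. The sketch below uses total variation distance on categorical events, which is my choice for illustration rather than a prescribed method; semantic and temporal drift would likely need different measures. The dimension names and the 0.2 threshold are assumptions.

```python
from collections import Counter
from typing import Dict, Iterable, List


def distribution(events: Iterable[str]) -> Dict[str, float]:
    """Relative frequency of each event label."""
    counts = Counter(events)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def total_variation(p: Dict[str, float], q: Dict[str, float]) -> float:
    """Half the summed absolute difference between two distributions (0 to 1)."""
    labels = set(p) | set(q)
    return 0.5 * sum(abs(p.get(l, 0.0) - q.get(l, 0.0)) for l in labels)


def drift_report(
    baseline: Dict[str, List[str]],
    recent: Dict[str, List[str]],
    threshold: float = 0.2,
) -> Dict[str, bool]:
    """Flag each dimension on its own, so one stable dimension cannot mask another."""
    return {
        dim: total_variation(distribution(baseline[dim]), distribution(recent[dim])) > threshold
        for dim in baseline
    }


baseline = {"action": ["read"] * 8 + ["write"] * 2, "target": ["orders"] * 10}
recent = {"action": ["read"] * 3 + ["write"] * 7, "target": ["orders"] * 10}
print(drift_report(baseline, recent))  # {'action': True, 'target': False}
```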

Step 31. Human reviewer drift monitoring. Are the humans reviewing escalations maintaining oversight quality over time? Approval rate trends. Review time. Consistency across similar cases. The oversight function monitored, not just the agents being overseen.

Step 32. Incident review. Full reconstruction of what happened, when, under which governance configuration, authorized by whom, and where the governance gap was. Not blame assignment. Root cause.

Step 33. Policy feedback loop. Do the rules need updating based on what the system produced? Governance that cannot learn from outcomes cannot improve.

Step 34. Model refresh and continuous training governance. Steps 1 and 2 cover initial provenance, but many organizations retrain models, swap embeddings, or refresh RAG sources on a quarterly cadence. Each update is a governance event. New training data changes behavioral baselines. New embeddings change what the agent considers semantically similar. A model swap can invalidate the behavioral fingerprint the governance system has built over months. Every refresh should trigger a provenance review, a baseline reset, and a re-validation against the behavioral contract before the updated system returns to production.

Step 35. Compliance reporting. Produce the evidence that regulators require. Verifiable records that meet applicable framework evidence standards. Having an audit trail is not enough. It must be presentable.

Step 36. Intelligence layer. Across all evaluations, what patterns emerge that improve future governance decisions? The system is improving its governance based on what it has observed.

Phase 5: Decommissioning

Every system eventually ends. This phase is missing from most governance frameworks, and it is where zombie agents, data exposure, and unresolved accountability chains accumulate.

Step 37. Agent sunset protocol. When an agent is retired, its cryptographic identity and credentials are formally revoked. The certificate joins the revocation record. The agent cannot reactivate. This prevents unauthorized access from persisting after the business reason for the agent’s existence ceases.

Step 38. Data erasure and right-to-be-forgotten compliance. If the agent was personalized, accessed user data, or accumulated memory, that data must be purged in compliance with GDPR, CCPA, and applicable frameworks. Decommissioning is not complete until the data trail is closed.

Step 39. Final audit and legal archive. The full governance record transitions from active audit trail to long-term legal archive. The chain of custody for the record itself is documented. The archive is accessible for the retention period required by applicable regulation.

Step 40. Meta-governance review. Who audited the evaluation engine? Who owns drift in the scoring model or the policy feedback loop? This is the who-watches-the-watchers question that regulators are beginning to ask. Before the agent is fully decommissioned, the governance layer that governed it gets its own review.

The Point

Yes, it is a lot. It is supposed to be a lot. AI governance is genuinely complicated, and anyone telling you otherwise is selling something simpler than what the problem actually requires.

Someone will read this and say we do something not on the list. Someone else will suggest additions.

Someone will argue that a step shouldn’t be here, that two steps should be one, or that the phases are wrong. That’s fine. That’s what happens when you put a framework on the internet.

40 is either right or wrong. Reasonable people can debate the edges.

What is harder to debate is that most organizations are not looking at governance at anything close to this scope. They are covering a handful of steps, calling it done, and moving on. That is not a criticism. It is a reality. And if this list does one thing, it is to make visible how much of the lifecycle exists outside whatever any single team, tool, or framework currently touches.

The gaps are real. And they will remain there until someone decides they are worth closing.

Chris Hood is an AI strategist and author of the #1 Amazon Best Seller Infailible and Customer Transformation, and has been recognized as one of the Top 30 Global Gurus for Customer Experience. His latest book, Unmapping Customer Journeys, will be published in 2026.