Degenerative AI is Real

I bring up the topic of degenerative AI in almost every talk I give.

And almost every time, I get the same reaction. A pause. A slight tilt of the head. A room full of sharp, experienced people looking at me as if I had just said something that cannot possibly be true.

The idea that an AI system can get worse over time, that the very act of using it can quietly corrupt its reliability, runs against everything the industry has been selling. We have been told that more data means better models. That feedback improves performance. That AI systems learn, adapt, and grow more capable with use.

All of that can be true. And all of that can mask a failure mode that most organizations are completely unprepared for.

The timing makes this more concerning. Recent figures indicate that more than half of the content on the internet is now generated by AI, which means a growing share of the data that future models learn from was produced by models in the first place.

What Degenerative AI Actually Means

Degenerative AI occurs when the feedback loops feeding a model begin to work against it.

It starts subtly. A model produces outputs. Those outputs influence behavior. That behavior generates new data. That new data gets fed back into the system. And if nobody is watching closely, the model begins training on the consequences of its own mistakes.

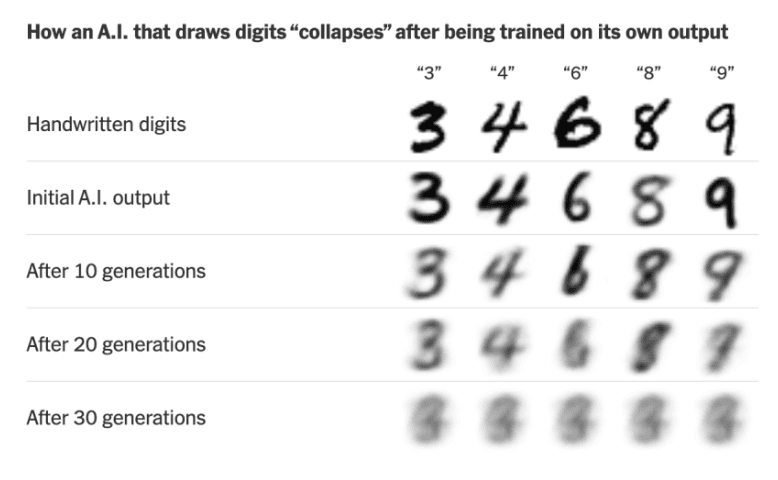

The technical term for this is model collapse. Feed a generative model enough AI-produced content, and it begins to lose the richness and diversity of the original human-generated data it was trained on. The outputs narrow. Edge cases disappear. The model becomes increasingly confident about a progressively narrower view of the world.
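The narrowing is easy to reproduce in a toy setting. The sketch below is a deliberate simplification, not how production generative models are trained: it assumes the "model" is nothing more than a one-dimensional Gaussian, and that each generation is fit only to samples drawn from the previous generation's model. `simulate_collapse` and its parameters are hypothetical. Because every finite sample under-represents the tails, the fitted spread drifts downward from one generation to the next.

```python
import random
import statistics


def simulate_collapse(generations: int = 300, sample_size: int = 20, seed: int = 0) -> list[float]:
    """Toy model collapse (hypothetical helper): each generation's "model" is a
    Gaussian fit only to samples produced by the previous generation's model.
    Returns the fitted standard deviation after each generation."""
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0: stands in for the original human data
    history = [sigma]
    for _ in range(generations):
        # The current model generates the next training set...
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        # ...and the next model is fit to that synthetic data alone (MLE fit).
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)
        history.append(sigma)
    return history


if __name__ == "__main__":
    stds = simulate_collapse()
    for gen in (0, 50, 100, 200, 300):
        print(f"generation {gen:3d}: fitted std = {stds[gen]:.6f}")
```

With the defaults, the fitted standard deviation ends up far below the original 1.0. A larger `sample_size` slows the shrinkage but, in this toy, does not eliminate the downward drift.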

But model collapse is only one expression of a broader degenerative pattern. The same dynamic occurs wherever a model’s outputs shape the inputs it later learns from. A recommendation engine that nudges users toward certain content and then trains on their engagement with that content is shaping its own future. A hiring tool that filters candidates based on historical patterns and receives feedback only on the candidates it lets through reinforces the very biases it was supposed to eliminate. A customer service model that resolves certain queries automatically and then trains on the queries that remain never sees the ones it handled poorly, because those users simply left.
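The recommendation-engine loop can be sketched in a few lines. This is a hypothetical greedy recommender with made-up click rates, assuming a cold start in which only the first item has an early click on record. It always shows the item with the best estimated click rate and observes clicks only on what it shows, so its training signal comes entirely from its own choices.

```python
import random


def greedy_recommender(true_click_rates: dict[str, float], rounds: int = 1000, seed: int = 1) -> dict[str, int]:
    """Hypothetical greedy recommender that learns only from engagement with
    the items it chose to show. Returns how many times each item was shown."""
    rng = random.Random(seed)
    # Cold-start estimates: every item counted as shown once, but only the
    # first item has an early click recorded.
    clicks = {item: 0 for item in true_click_rates}
    shows = {item: 1 for item in true_click_rates}
    first = next(iter(true_click_rates))
    clicks[first] = 1
    for _ in range(rounds):
        # Recommend the item with the best *estimated* click rate (no exploration).
        chosen = max(true_click_rates, key=lambda item: clicks[item] / shows[item])
        shows[chosen] += 1
        # Feedback arrives only for the item that was actually shown.
        if rng.random() < true_click_rates[chosen]:
            clicks[chosen] += 1
    return shows


shows = greedy_recommender({"A": 0.3, "B": 0.5, "C": 0.4})
print(shows)  # {'A': 1001, 'B': 1, 'C': 1}
```

Item B, the one users would click most often, is never shown after the cold start, so the engagement data can never reveal its value. Exploration strategies such as epsilon-greedy are one common mitigation.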

In each case, the model is learning. The problem is what it is learning from.

The Slow Erosion Nobody Notices

What makes degenerative AI particularly dangerous is that it rarely announces itself.

A system that fails catastrophically on day one gets fixed or replaced. A system that degrades by 2% per quarter over 2 years becomes the background assumption. People adapt to its limitations without realizing that the limitations are growing. Workarounds accumulate. Teams build processes around the model’s blind spots without ever naming them as evidence of a deeper problem.
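To put that figure in perspective, assume the 2% is a relative decline that compounds each quarter (an interpretation; it could also be read as a flat two points per quarter). Two years is eight quarters:

$$
(1 - 0.02)^{8} = 0.98^{8} \approx 0.851
$$

Under that assumption, the system ends up at roughly 85% of its original performance, a cumulative loss of about 15%, even though no single quarter looked alarming.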

By the time someone asks why the model performs so much worse than it did eighteen months ago, the causal chain is nearly impossible to reconstruct. The training data has been updated dozens of times. The feedback signals have been adjusted. The deployment environment has changed. Nobody can point to the moment it started getting worse because there was no single moment. It eroded.

This is the compounding nature of degenerative AI. Small errors generate slightly worse data. Slightly worse data produces slightly worse outputs. Slightly worse outputs influence slightly worse behavior. Slightly worse behavior generates slightly worse data. The cycle does not accelerate dramatically. It just continues, quietly, until the gap between what the model was and what it has become is too large to ignore.

The erosion has long-term consequences, including complete model collapse or, more broadly, a flattening of human language.

Why the Industry Stares Blankly

The skepticism I encounter is understandable for a few reasons.

The AI industry is structured around progress narratives. More compute, more data, more capability. The story moves in one direction: forward and up. Degenerative AI is a story that moves sideways and downward, and it has to compete for attention with the product launches, benchmark improvements, and capability announcements that dominate the conversation.

There is also a measurement problem. Improvement is easy to see. A model that scores higher on a benchmark, resolves more tickets, or converts more leads is visibly better. Degradation is harder to see, especially when it is gradual, especially when the baseline has drifted along with the model, and especially when the feedback systems are themselves contaminated.

Organizations that track model performance at all tend to track it against recent baselines rather than original deployment conditions. If the baseline moves with the model, the degradation becomes invisible. The model is always performing roughly as well as it performed last month, even if it is performing dramatically worse than it performed two years ago.
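The difference between a moving baseline and a frozen one is easy to demonstrate. The sketch below assumes hypothetical monthly accuracy numbers that decay by 1% (relative) each month, and a hypothetical `detect_drift` helper that applies the same 3% alert threshold two ways: against last month, and against the score recorded at deployment.

```python
def detect_drift(scores: list[float], tolerance: float = 0.03) -> dict[str, list[int]]:
    """Hypothetical helper: compare each period's score against (a) the previous
    period and (b) the frozen deployment baseline. Returns the periods that
    trip each alert."""
    deployment_baseline = scores[0]
    rolling_alerts, frozen_alerts = [], []
    for t in range(1, len(scores)):
        # Rolling check: relative drop versus the previous period only.
        if (scores[t - 1] - scores[t]) / scores[t - 1] > tolerance:
            rolling_alerts.append(t)
        # Frozen check: relative drop versus original deployment conditions.
        if (deployment_baseline - scores[t]) / deployment_baseline > tolerance:
            frozen_alerts.append(t)
    return {"rolling": rolling_alerts, "frozen": frozen_alerts}


# Hypothetical monthly accuracy decaying 1% (relative) per month for two years.
monthly_accuracy = [0.90 * 0.99 ** month for month in range(25)]
alerts = detect_drift(monthly_accuracy)
print(alerts["rolling"])       # []
print(alerts["frozen"][0])     # 4
```

With these made-up numbers, the month-over-month check never fires, while the comparison against the deployment baseline fires from month 4 onward. By month 24 the model has lost about 21% of its original accuracy, and the rolling check still reports nothing.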

The Model You Deployed Is Not the Model You Have

This is the line that tends to land hardest in a room.

Every organization that has been running an AI system for more than a year is operating a system that has changed since deployment. Some of that change is intentional: updates, retraining, fine-tuning. But some of it is the accumulated weight of feedback loops, data drift, and the slow pressure of a model learning from its own influence on the world.

The model you deployed was evaluated, tested, and approved. The model you have today has been shaped by everything that happened after that approval. Whether it is better or worse depends on what it has been learning from, and most organizations cannot answer that question with confidence.
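One way to start answering that question is to report how much of each retraining batch the model itself produced or shaped. This is a minimal sketch, assuming every training record is tagged with its provenance when it is collected; the `TrainingRecord` type, its category labels, and `provenance_share` are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class TrainingRecord:
    # Hypothetical provenance tag attached at ingestion time:
    #   "human"            = collected independently of the model
    #   "model_generated"  = produced by the model itself
    #   "model_influenced" = human behavior observed after the model intervened
    source: str
    text: str


def provenance_share(records: list[TrainingRecord]) -> dict[str, float]:
    """Fraction of the batch coming from each provenance category."""
    counts = Counter(record.source for record in records)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {source: n / total for source, n in counts.items()}


batch = [
    TrainingRecord("human", "original support ticket"),
    TrainingRecord("human", "survey response"),
    TrainingRecord("model_generated", "auto-drafted reply"),
    TrainingRecord("model_influenced", "ticket filed after a bot suggestion"),
]
shares = provenance_share(batch)
print(shares)  # {'human': 0.5, 'model_generated': 0.25, 'model_influenced': 0.25}
```

Tracking the non-human share over successive retraining cycles gives at least a rough signal of how much the model is learning from its own influence rather than from the world it was originally trained on.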

Degenerative AI is real. The blank stares tell me the industry is not ready for it.

The question worth asking before the next model review is simple: Are we measuring whether this system is actually getting better, or are we just assuming it is?


Chris Hood is an AI strategist and author of the #1 Amazon Best Seller Infailible and Customer Transformation, and has been recognized as one of the Top 30 Global Gurus for Customer Experience. His latest book, Unmapping Customer Journeys, will be published in 2026.