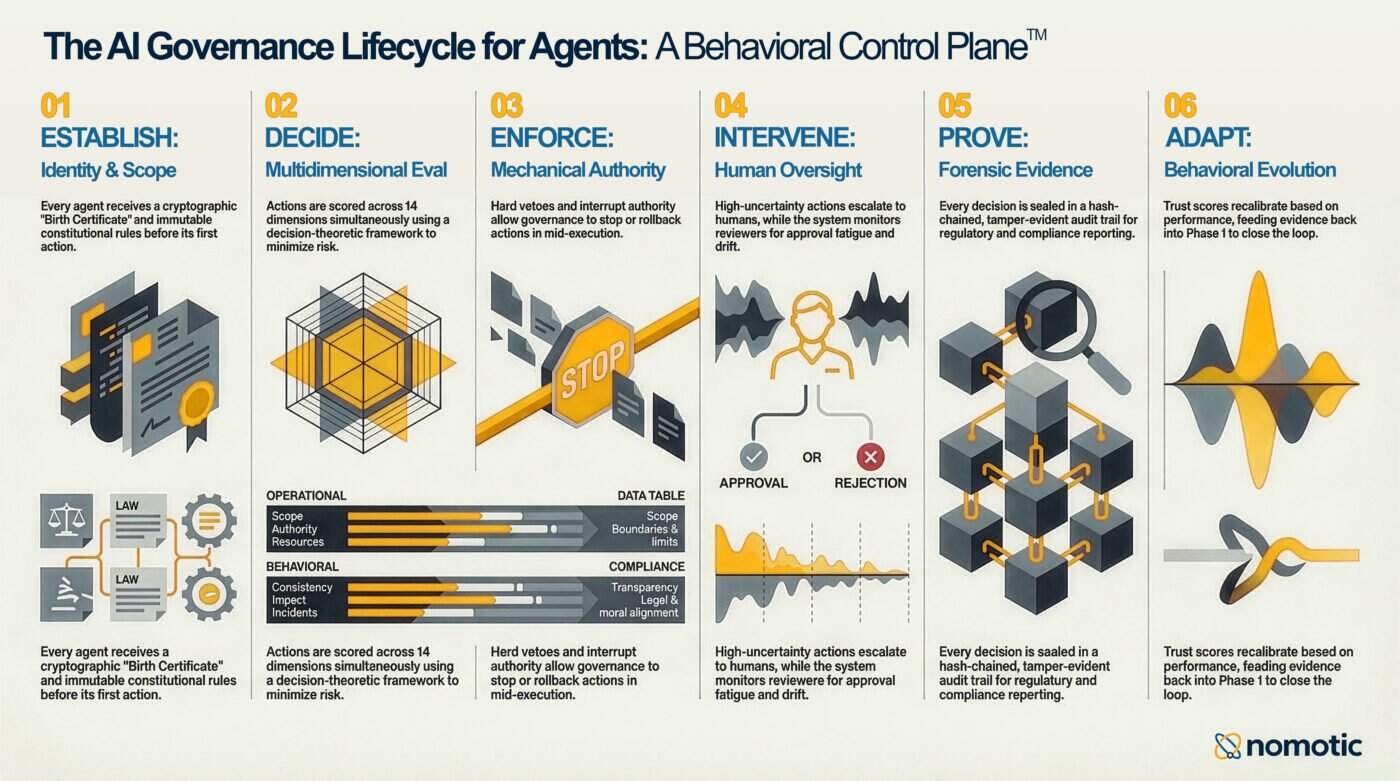

The Six Phases of AI Governance for Agents

Most governance tools cover one phase. The lifecycle has six.

I keep seeing various diagrams on LinkedIn. Someone maps out eight or ten “layers” of AI governance, assigns a different startup to each layer, and calls it an architecture.

The individual capabilities matter. But the framing is wrong, because layers imply independence. Stack them up, pick the ones you need, skip the ones you don’t. That’s how you think about middleware. It’s not how governance works.

Governance is a lifecycle. Every phase feeds the next. The output of the last phase tightens the first. Skip a phase, and the loop breaks. Bolt together six independent tools from six different vendors and you get six tools with no shared identity, no unified trust model, and no integrated audit trail. You get the illusion of coverage, but the reality is gaps at every seam.

There are six phases in AI Governance. And they form a closed loop.

Phase 1: Establish

Who is this agent? What authority does it hold? What are the rules that can never be overridden?

This is where governance begins. Before the agent takes a single action, it needs a cryptographic identity bound to a human owner. It needs a behavioral contract that declares what it’s expected to do, versioned and sealed. It needs constitutional rules that set the absolute floor beneath all other governance. Not configurable rules. Immutable ones.
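
To make the idea of a sealed contract and an immutable floor concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `Contract` fields, the rule names in `CONSTITUTION`, and the `permitted` helper are hypothetical, and a plain SHA-256 digest stands in for what would more plausibly be a signature made with the owner's key.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

# Hypothetical constitutional rules: actions no contract or override can allow.
CONSTITUTION = frozenset({"exfiltrate_secrets", "disable_audit"})


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class Contract:
    agent_id: str
    owner: str            # the human the agent is bound to
    version: int
    allowed_actions: tuple

    def seal(self) -> str:
        """Content hash of the contract; any change yields a different seal."""
        payload = json.dumps(asdict(self), sort_keys=True).encode()
        return hashlib.sha256(payload).hexdigest()


def permitted(contract: Contract, action: str,
              overrides: frozenset = frozenset()) -> bool:
    # The constitutional floor is checked first, so nothing below can lift it.
    if action in CONSTITUTION:
        return False
    return action in contract.allowed_actions or action in overrides
```

Note the ordering in `permitted`: the immutable rules are evaluated before the contract or any configurable override, which is what makes them a floor rather than a default.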

Most teams start here and stop here. They configure permissions, write a policy document, and move on. But establishment without the other five phases is a one-time setup in a world that is constantly changing. The agent drifts. The context shifts. The policy document gathers dust.

Phase 2: Decide

Given this action, this context, and everything we know about this agent’s behavioral history, what is the optimal governance decision?

This is the phase almost everyone gets wrong. They reduce it to rules. Allow or deny. Pass or fail. A single score against a single threshold.

Governance is a decision problem. Blocking a safe action has a cost. Allowing a dangerous one has a cost. The right framework minimizes the expected total cost given available information. That means scoring across multiple dimensions simultaneously, not sequentially. It means recognizing that the same action by the same agent can require different governance responses depending on when it happens, what else is happening, and where the agent’s trust trajectory currently sits.

It also means knowing when to ask for help. If the expected value of human input exceeds the cost of the delay, escalate. If it doesn’t, decide. Most systems escalate based on static thresholds. The decision-theoretic approach escalates based on information value.
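
A rough sketch of that logic in Python, under loud simplifying assumptions: risk is collapsed to a single probability of harm (the multi-dimensional scoring described above is not modeled), the human reviewer is assumed to always get it right, and the `Costs` fields and `decide` function are hypothetical names, not any product's API.

```python
from dataclasses import dataclass


@dataclass
class Costs:
    block_safe: float     # cost of blocking an action that was actually safe
    allow_harmful: float  # cost of allowing an action that was actually harmful
    escalation: float     # cost of the delay and reviewer time


def decide(p_harm: float, costs: Costs) -> str:
    """Return 'allow', 'deny', or 'escalate' by minimizing expected cost."""
    # Allowing is only costly if the action turns out to be harmful.
    cost_allow = p_harm * costs.allow_harmful
    # Denying is only costly if the action turns out to be safe.
    cost_deny = (1.0 - p_harm) * costs.block_safe
    best_alone = min(cost_allow, cost_deny)

    # Assumption: a reviewer resolves the uncertainty perfectly, so the value
    # of asking is the expected error cost we would otherwise carry.
    value_of_review = best_alone
    if value_of_review > costs.escalation:
        return "escalate"
    return "allow" if cost_allow <= cost_deny else "deny"
```

With these definitions, escalation is reserved for the uncertain middle. Confident calls in either direction are decided automatically, because a human's input there is worth less than the delay.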

Phase 3: Enforce

Make the decision real. Not advisory. Not a recommendation. Mechanically enforced with the authority to stop an action mid-stream.

There’s a commitment boundary between deciding what an agent should do and ensuring it actually happens. Most governance tools quietly become monitoring tools at this boundary. They issue a verdict. Then they hope the execution layer respects it.

Enforcement means the tool doesn’t run unless governance approves. It means governance maintains ultimate authority during execution, not just before it. It means cryptographic proof of authorization travels with the action as a signed, single-use, time-bounded governance seal. And for multi-step workflows, it means that every seal is hash-linked to the previous one, creating verifiable proof of continuous governance from the first step to the last.
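
Here is one way a signed, single-use, time-bounded, hash-linked seal could look. This is a sketch under assumptions, not a description of any shipping implementation: it uses an HMAC from Python's standard library where a real system would likely use asymmetric signatures, it tracks used nonces in process memory, and the names `issue_seal`, `seal_hash`, and `redeem` are hypothetical.

```python
import hashlib
import hmac
import json
import secrets
import time

KEY = secrets.token_bytes(32)  # hypothetical signing key held by governance
GENESIS = "0" * 64             # "previous hash" for the first step in a workflow
_used_nonces = set()           # in-memory only; a real system would persist this


def _sign(body: dict) -> str:
    payload = json.dumps(body, sort_keys=True).encode()
    return hmac.new(KEY, payload, hashlib.sha256).hexdigest()


def issue_seal(action: str, prev_hash: str, ttl_s: float = 5.0, now=None) -> dict:
    now = time.time() if now is None else now
    body = {"action": action, "nonce": secrets.token_hex(16),
            "expires_at": now + ttl_s, "prev": prev_hash}
    return {**body, "sig": _sign(body)}


def seal_hash(seal: dict) -> str:
    """Hash of a full seal; the next seal in the workflow links to this."""
    return hashlib.sha256(json.dumps(seal, sort_keys=True).encode()).hexdigest()


def redeem(seal: dict, action: str, expected_prev: str, now=None) -> bool:
    """Gate in front of the tool: run only if this returns True."""
    now = time.time() if now is None else now
    body = {k: v for k, v in seal.items() if k != "sig"}
    if not hmac.compare_digest(_sign(body), seal.get("sig", "")):
        return False                      # forged or tampered
    if seal["action"] != action or seal["prev"] != expected_prev:
        return False                      # wrong action or broken chain
    if now > seal["expires_at"]:
        return False                      # time-bounded
    if seal["nonce"] in _used_nonces:
        return False                      # single-use
    _used_nonces.add(seal["nonce"])
    return True
```

The point of the design is that the tool call sits behind `redeem`. A verdict that is never presented as a valid seal never becomes an action.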

Phase 4: Intervene

When governance can’t decide on its own, humans exercise genuine authority.

“Human in the loop” has become a compliance checkbox. The human exists somewhere in the workflow. Whether they actually exercise judgment is another question entirely.

Real intervention means halting execution and giving the human everything they need to make a real decision: the proposed action, the dimension scores, the reasoning, the trust trajectory, and the specific reason governance escalated. It means monitoring whether the human is actually making a decision or just approving. If approval rates climb too high, if reversals drop to zero, if consecutive agreements stack up, something is wrong on the human side.
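
Those three warning signs are simple to check. A minimal sketch, assuming a hypothetical `Review` record and placeholder thresholds (the 95% approval rate and 20-review streak are illustrative numbers, not recommendations):

```python
from dataclasses import dataclass


@dataclass
class Review:
    approved: bool                 # reviewer approved the escalated action
    reversed_recommendation: bool  # reviewer overrode what governance suggested


def oversight_flags(reviews, max_approval_rate=0.95, max_streak=20):
    """Return warning flags suggesting a reviewer may be rubber-stamping."""
    flags = []
    if not reviews:
        return flags

    approval_rate = sum(r.approved for r in reviews) / len(reviews)
    if approval_rate > max_approval_rate:
        flags.append("approval_rate_high")

    if not any(r.reversed_recommendation for r in reviews):
        flags.append("no_reversals")

    # Count how many of the most recent reviews simply agreed with governance.
    streak = 0
    for r in reversed(reviews):
        if r.reversed_recommendation:
            break
        streak += 1
    if streak >= max_streak:
        flags.append("agreement_streak")

    return flags
```

A flag here is a prompt to look closer, not proof of a problem. A reviewer can legitimately agree with good recommendations many times in a row.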

The hardest part of human oversight isn’t getting humans into the loop. It’s verifying that they’re actually overseeing.

Phase 5: Prove

Produce evidence that governance happened. Not logs. Cryptographic proof.

Auditors don’t want to hear “we governed it.” They want to see the record. They want to verify it hasn’t been tampered with. They want to ask “what would have happened under different rules?” and get an answer.

A hash-chained audit trail where every record is cryptographically linked to the previous one. Governance seals that prove authorization at the moment of decision. Counterfactual replay that runs historical actions against alternative configurations. Compliance scorecards that map governance data directly to framework requirements. This is the phase that turns governance from an internal practice into externally verifiable evidence.
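
A hash-chained trail and a counterfactual replay fit in a few lines. This sketch makes several assumptions: records carry a single `risk` score, the alternative configuration is just a different deny threshold, and `append`, `verify`, and `replay` are hypothetical helper names.

```python
import hashlib
import json

GENESIS = "0" * 64  # stand-in "previous hash" for the first record


def _digest(record: dict) -> str:
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


def append(trail: list, action: str, risk: float, verdict: str) -> None:
    prev = trail[-1]["hash"] if trail else GENESIS
    record = {"action": action, "risk": risk, "verdict": verdict, "prev": prev}
    record["hash"] = _digest(record)  # digest covers the fields and the link
    trail.append(record)


def verify(trail: list) -> bool:
    """Recompute every hash and link; any edit to history breaks the chain."""
    prev = GENESIS
    for rec in trail:
        body = {k: v for k, v in rec.items() if k != "hash"}
        if rec["prev"] != prev or _digest(body) != rec["hash"]:
            return False
        prev = rec["hash"]
    return True


def replay(trail: list, deny_above: float) -> list:
    """Counterfactual: which recorded verdicts would differ under this threshold?"""
    changed = []
    for rec in trail:
        alt = "deny" if rec["risk"] > deny_above else "allow"
        if alt != rec["verdict"]:
            changed.append((rec["action"], rec["verdict"], alt))
    return changed
```

`verify` is what lets an auditor check the record without trusting the operator, and `replay` is what answers “what would have happened under different rules?” from the same data.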

Phase 6: Adapt

The system learns. Trust evolves. Boundaries tighten or relax based on evidence. The loop closes.

An agent earns trust through observed behavioral consistency. It loses trust faster than it earns it. That asymmetry is intentional. Behavioral fingerprints define what “normal” looks like and detect when patterns shift. Drift detection catches degradation across five distributions for both agents and the humans reviewing them.
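
The asymmetry can be written as a simple update rule. This is an illustrative sketch only: the `gain` and `loss` rates are made-up values, trust is assumed to live on a 0 to 1 scale, and drift detection is not modeled here.

```python
def update_trust(trust: float, consistent: bool,
                 gain: float = 0.02, loss: float = 0.10) -> float:
    """Move trust toward 1 slowly on good behavior, toward 0 quickly on bad."""
    if consistent:
        trust += gain * (1.0 - trust)   # small step toward full trust
    else:
        trust -= loss * trust           # larger step toward zero
    return min(1.0, max(0.0, trust))    # keep the score in [0, 1]
```

Because `loss` is larger than `gain`, one inconsistent action at mid-range trust costs more than one consistent action earns. The agent has to rebuild trust over several observations rather than one.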

Everything Phase 6 learns feeds back into Phase 1. Trust changes affect future evaluations. Drift detection tightens boundaries. Fleet-level patterns inform contract updates. The lifecycle is a loop, not a stack.

The Loop, Not the Stack

Establish. Decide. Enforce. Intervene. Prove. Adapt. Back to Establish.

This is what we built at Nomotic. Not a layer in someone else’s stack. A complete governance lifecycle as a single integrated runtime. One identity model. One trust system. One audit trail. Zero external dependencies. Sub-millisecond evaluation.

The market is full of tools that cover one phase well.

Nobody else closes the loop.

The Behavioral Control Plane does.

Chris Hood is an AI strategist and author of the #1 Amazon Best Seller Infailible and Customer Transformation, and has been recognized as one of the Top 30 Global Gurus for Customer Experience. His latest book, Unmapping Customer Journeys, will be published in 2026.