The Agent Maturity Model: Six Levels of Real Capability (Not Hype)

There are levels of AI capability. Levels of automation. Levels of agent complexity. Most of them were written to describe implementation patterns such as tool-calling loops, context windows, and chaining strategies rather than to answer the question that actually matters to the people deploying these systems.

What can this agent deliver in the real world without constant human intervention?

That is a different question. And it requires a different framework. Not one organized around how the agent is built, but one organized around what the agent can actually do, how reliably it does so, and how much human scaffolding it still requires beneath the performance.

One more thing worth stating up front, before the levels: none of this is autonomous. Not Level 4. Not Level 5. Not even Level 6 in the full philosophical sense. Every system, at every level, operates within goals, constraints, and infrastructure designed by humans. The framework is about capability depth and governance complexity. The accountability chain runs through humans at every level.

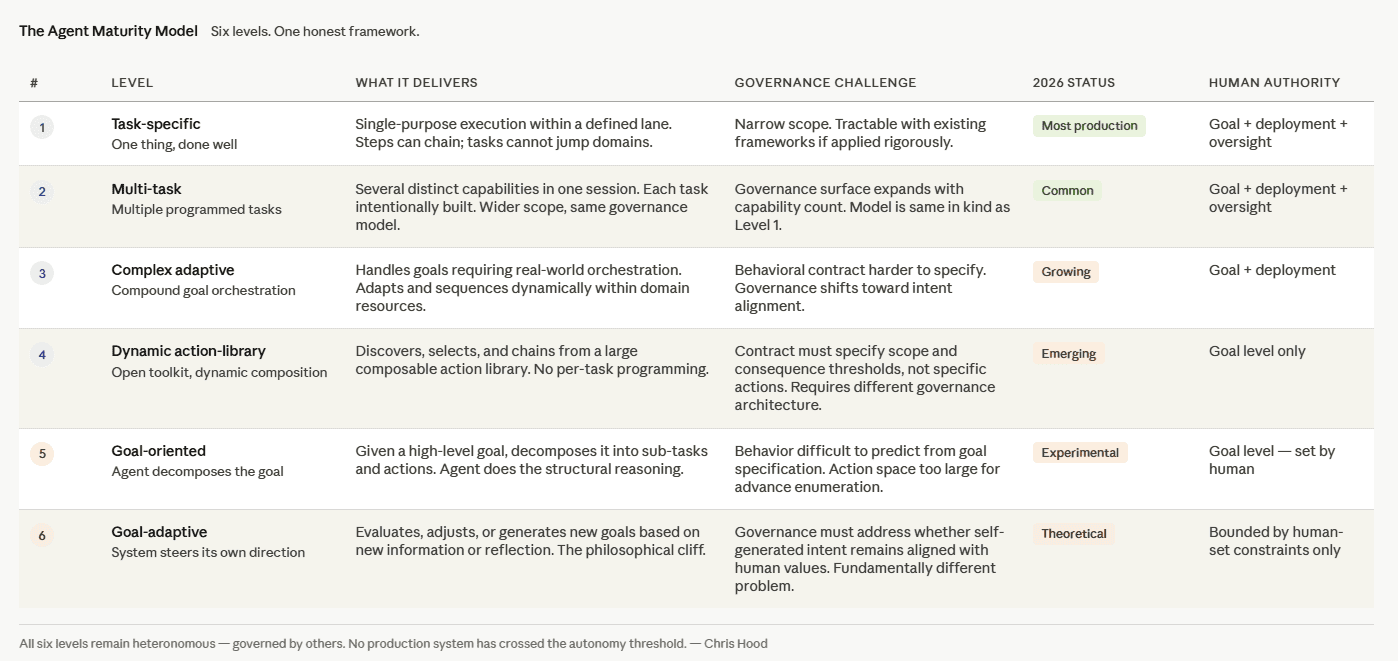

Here is that framework. Six levels. Grounded in what exists in production today and what is being built toward.

Level 1: Task-Specific Agents

This is where almost everything in production lives right now.

A Level 1 agent does one thing. It does that thing well, sometimes extraordinarily well. It can chain steps within the task, adapt to variations, and handle a meaningful range of inputs. What it cannot do is jump to an unrelated task. If scheduling meetings was not explicitly built into its logic, it does not schedule meetings. The capability boundary is defined by what was intentionally wired in.

Customer service bots. Document summarizers. Invoice processors. Scheduling assistants. These are Level 1 agents. Most of what organizations are deploying today, regardless of the marketing language used to describe them, is Level 1. Single-purpose, well-scoped, operating reliably within a defined lane.

The governance question at Level 1 is relatively tractable. The behavioral contract is narrow. The audit trail is bounded. The scope violations are detectable because the scope is specific. Level 1 agents can be governed using well-designed, well-applied existing frameworks.
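A minimal sketch of why a narrow contract is tractable to govern, assuming the contract can be written as a fixed set of action names. The class, the agent name, and the action names are hypothetical illustrations, not part of any specific product.

```python
from dataclasses import dataclass, field


@dataclass
class TaskSpecificAgent:
    """Hypothetical Level 1 agent: one task, one explicitly wired set of actions."""
    name: str
    allowed_actions: frozenset
    audit_log: list = field(default_factory=list)

    def request(self, action: str) -> bool:
        # With a narrow contract, the scope check is a simple set lookup.
        permitted = action in self.allowed_actions
        # Every request is recorded, so the audit trail stays bounded and readable.
        self.audit_log.append((action, "allowed" if permitted else "scope_violation"))
        return permitted


invoice_agent = TaskSpecificAgent(
    name="invoice-processor",
    allowed_actions=frozenset({"extract_fields", "validate_totals", "post_to_ledger"}),
)
ok = invoice_agent.request("validate_totals")       # inside the lane
blocked = invoice_agent.request("schedule_meeting")  # never wired in
```

Because the scope is specific, the violation is detectable by construction: anything outside the set is logged as a scope violation.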

Level 2: Multi-Task Agents

A Level 2 agent handles several distinct, pre-programmed tasks in the same session. Pay a bill, write a report, schedule a meeting, and handle a customer inquiry. Each capability was intentionally built. Each has its own logic path. The agent is executing against a wider set of defined capabilities within the same session context.

A practical example: a personal assistant agent that handles email triage, calendar management, and expense reporting in a single session. Three distinct capability domains. Each was wired in deliberately. The session feels broad. The underlying architecture is a collection of Level 1 patterns working together.

The distinction from Level 1 is breadth of scope, not depth of reasoning. The governance surface area expands proportionally with the number of capabilities, but the governance model is the same in kind.
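One way to picture "a collection of Level 1 patterns" is a dispatch table: each capability is a separately wired handler, and the session simply routes between them. This is a sketch under that assumption, and the handler names are invented for illustration.

```python
from typing import Callable, Dict


def triage_email(payload: str) -> str:
    return f"triaged: {payload}"


def manage_calendar(payload: str) -> str:
    return f"scheduled: {payload}"


def file_expense(payload: str) -> str:
    return f"expensed: {payload}"


# Each entry is its own Level 1 pattern with its own logic path.
HANDLERS: Dict[str, Callable[[str], str]] = {
    "email": triage_email,
    "calendar": manage_calendar,
    "expense": file_expense,
}


def handle(capability: str, payload: str) -> str:
    handler = HANDLERS.get(capability)
    if handler is None:
        # Breadth is still bounded by what was intentionally built.
        raise ValueError(f"capability not wired in: {capability}")
    return handler(payload)


# The governance surface grows with the number of wired capabilities.
governance_surface = len(HANDLERS)
```

Adding a fourth capability adds a fourth entry to govern, but the kind of check stays the same.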

Most production agents that marketing describes as sophisticated assistants or multi-modal agents are Level 2. The capabilities feel broader than a single task. The underlying architecture is a collection of Level 1 patterns.

Level 3: Complex Adaptive Agents

Level 3 is where the behavior starts to feel qualitatively different.

A complex adaptive agent handles compound, real-world goals that require orchestration. Planning a business trip is the clearest example. The goal is singular. The path to it requires dynamically booking flights, hotels, ground transportation, restaurants, and meetings, sequencing them coherently, adapting when individual bookings are unavailable, and producing an outcome that serves the original intent.

The agent has not been programmed with the specific combination of steps for this trip. It has been given the underlying resources and logic to adapt, sequence, and improvise within the relevant domain. The behavior feels fluid because the adaptation is genuine, not scripted. But it is still guided by desired outcomes and programmed constraints. Someone defined what “plan a business trip” means and what resources the agent has access to.
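The trip example can be sketched as follows, assuming the simplest possible form of adaptation: each step has a human-defined list of acceptable options, and the agent takes the first one that is still available. Real systems would reason far more richly. The function, step names, and option names here are hypothetical.

```python
from typing import Dict, List, Set


def plan_trip(steps: Dict[str, List[str]], unavailable: Set[str]) -> Dict[str, str]:
    """Pick the first available option per step, adapting when a booking fails."""
    plan = {}
    for step, options in steps.items():
        choice = next((o for o in options if o not in unavailable), None)
        if choice is None:
            # The agent adapts within its resources; it does not invent new ones.
            raise RuntimeError(f"no available option for {step}")
        plan[step] = choice
    return plan


# Humans defined what "plan a business trip" means and which resources exist.
steps = {
    "flight": ["morning_direct", "afternoon_direct", "morning_one_stop"],
    "hotel": ["near_office", "near_airport"],
    "ground": ["rideshare", "rental_car"],
}
plan = plan_trip(steps, unavailable={"morning_direct", "near_office"})
```

No one scripted the combination "afternoon flight plus airport hotel". It falls out of the goal, the constraints, and what happened to be available.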

This is also where persistent project context starts to matter. A file like AGENTS.md, an always-loaded markdown document containing project architecture, conventions, and setup context, means the agent can orchestrate across related steps without the human repeating the same background information in every session. Persistent context is what separates an agent that feels fluid from one that starts from zero on every request.

Level 3 is where governance frameworks start to encounter genuine complexity. The behavioral contract is harder to specify in advance. The action space is larger. The governance question shifts from “did this agent do what it was programmed to do” toward “did this agent do what we intended, given a goal we defined broadly.”

Level 4: Dynamic Action-Library Agents

This is a significant jump.

A Level 4 agent is no longer programmed per task. Instead, it has access to a large, composable library of atomic actions and tools, potentially enormous in scope. It discovers, selects, and chains whatever it needs in the moment. The capability is not a fixed list of approved or banned actions. It is an open toolkit that the agent dynamically explores to achieve the goal it has been given.

At Level 4, the agent is a masterful tool-user. At Level 5, it becomes a capable project manager. The distinction is who does the structural decomposition.

This is where intent-based API design (AGIS / Agentic API), tool routers, MCP connectors, SKILL.md files, and persistent skill libraries become genuinely important rather than theoretical. SKILL.md packages reusable procedural knowledge, step-by-step playbooks, templates, and executable scripts that the agent loads only when relevant. AGENTS.md supplies always-loaded project rules. MCP connectors act as a universal bridge, enabling the agent to discover and interact with external data sources and systems without custom integrations for each one. Together, they turn a static toolkit into a dynamic, explorable action library.
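The split between always-loaded rules and on-demand skills can be sketched as below. This is an illustration only: it uses in-memory strings rather than real AGENTS.md or SKILL.md files, and the `triggers` keyword matching is an assumed stand-in for however a given agent decides a skill is relevant.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Skill:
    name: str
    triggers: frozenset  # assumed relevance signal: keywords in the task
    body: str            # the playbook text, loaded only when relevant


def build_context(project_rules: str, skills: List[Skill], task: str) -> str:
    """Always include project rules; include a skill body only when triggered."""
    task_words = set(task.lower().split())
    sections = [project_rules]
    for skill in skills:
        if task_words & skill.triggers:
            sections.append(f"[skill: {skill.name}]\n{skill.body}")
    return "\n\n".join(sections)


rules = "Project rules: use type hints; run tests before committing."
skills = [
    Skill("pdf-report", frozenset({"pdf", "report"}), "Steps for building the PDF report."),
    Skill("deploy", frozenset({"deploy", "release"}), "Steps for a staged release."),
]
context = build_context(rules, skills, "Generate the quarterly PDF report")
```

Only the relevant playbook enters the context, which is what keeps a large skill library from crowding out the task itself.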

A concrete example: an advanced coding agent that encounters an unfamiliar library, reads the documentation, writes a wrapper, tests it, and integrates it, all within a single task, without being explicitly told to do any of those steps. The agent reasoned about what was needed, found the tools, and composed them.
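The coding example above can be sketched as a search over a tool library, assuming each tool declares what it consumes and what it produces. The tool names and artifact labels are hypothetical, and a real agent would select tools by reasoning rather than by exact label matching.

```python
from collections import deque
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Tool:
    name: str
    consumes: str
    produces: str


# A curated library: the agent explores it, but a team decided what is in it.
LIBRARY = [
    Tool("read_docs", "library_name", "api_notes"),
    Tool("write_wrapper", "api_notes", "wrapper_code"),
    Tool("run_tests", "wrapper_code", "tested_wrapper"),
    Tool("integrate", "tested_wrapper", "merged_change"),
    Tool("summarize", "api_notes", "summary"),
]


def compose(have: str, want: str, library: List[Tool]) -> Optional[List[str]]:
    """Breadth-first search for the shortest tool chain from `have` to `want`."""
    queue = deque([(have, [])])
    seen = {have}
    while queue:
        artifact, chain = queue.popleft()
        if artifact == want:
            return chain
        for tool in library:
            if tool.consumes == artifact and tool.produces not in seen:
                seen.add(tool.produces)
                queue.append((tool.produces, chain + [tool.name]))
    return None  # the goal is not reachable with this library


chain = compose("library_name", "merged_change", LIBRARY)
# chain == ["read_docs", "write_wrapper", "run_tests", "integrate"]
```

Nobody told the agent to perform those four steps in that order. The chain was discovered, yet it could only ever be built from tools someone placed in the library.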

Level 4 agents sound like something out of science fiction. They are real at a small scale in specific domains. They are still heteronomous. The goal was set by a human. The tool library was curated by a team. The boundaries are real even when they feel invisible.

Governance at Level 4 requires a different architecture than Levels 1 through 3. The behavioral contract cannot enumerate specific actions. It has to specify scope, consequence thresholds, and reversibility constraints that apply to whatever the agent discovers and composes. This is the governance problem that most runtime AI governance products were not designed for.
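A contract of this kind might look like the sketch below, assuming every proposed action can be described by a domain, an estimated cost, and a reversibility flag. Those three fields, the type names, and the decision strings are illustrative assumptions rather than an established schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    tool: str
    domain: str
    estimated_cost: float
    reversible: bool


@dataclass(frozen=True)
class Contract:
    allowed_domains: frozenset  # scope
    max_cost: float             # consequence threshold
    require_reversible: bool    # reversibility constraint


def evaluate(action: ProposedAction, contract: Contract) -> str:
    """Judge an action by its properties, not by whether it was enumerated."""
    if action.domain not in contract.allowed_domains:
        return "deny: out of scope"
    if action.estimated_cost > contract.max_cost:
        return "escalate: consequence threshold exceeded"
    if contract.require_reversible and not action.reversible:
        return "escalate: irreversible action"
    return "allow"


contract = Contract(frozenset({"code", "docs"}), max_cost=50.0, require_reversible=True)
decision = evaluate(ProposedAction("force_push", "code", 1.0, reversible=False), contract)
```

The check never mentions `force_push` by name. It applies to whatever the agent discovers and composes, which is the property an enumerated allowlist cannot provide.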

Level 5: Goal-Oriented Agents

Given a high-level goal, a Level 5 agent decomposes it into whatever tasks and actions the goal requires, draws from its available libraries to pursue it, and operates across a dramatically expanded range of domains and depths.

The difference from Level 4 is the degree to which the agent is doing the structural reasoning rather than the human. At Level 4, a human typically defines the task decomposition, and the agent finds the tools. At Level 5, the agent is doing meaningful decomposition of the goal itself, determining the sub-goals, sequencing the work, and deciding how to measure progress.
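The three responsibilities named above (determining sub-goals, sequencing the work, measuring progress) can be sketched as follows. The `decompose` function is a hard-coded, hypothetical stand-in for the agent's reasoning, and the sketch assumes Python 3.9 or later for the standard-library `graphlib` module.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set


def decompose(goal: str) -> Dict[str, Set[str]]:
    """Hypothetical stand-in for agent reasoning: sub-goal -> prerequisites."""
    if goal == "launch customer survey":
        return {
            "draft questions": set(),
            "pick audience": set(),
            "send survey": {"draft questions", "pick audience"},
            "analyze results": {"send survey"},
        }
    raise ValueError(f"no decomposition available for: {goal}")


def sequence(subgoals: Dict[str, Set[str]]) -> List[str]:
    # Order the work so every sub-goal comes after its prerequisites.
    return list(TopologicalSorter(subgoals).static_order())


def progress(subgoals: Dict[str, Set[str]], done: Set[str]) -> float:
    # One simple progress measure: the fraction of sub-goals completed.
    return len(done & set(subgoals)) / len(subgoals)


subgoals = decompose("launch customer survey")
order = sequence(subgoals)
halfway = progress(subgoals, {"draft questions", "pick audience"})
```

At Level 4 a human would write the dictionary inside `decompose`. At Level 5 the agent produces it, which is exactly why its behavior becomes harder to predict from the goal alone.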

Emerging protocols like the Agent Transfer Protocol (AGTP) point to the infrastructure this level eventually requires. Where MCP handles tool and context access, AGTP is designed as a dedicated agent-native layer for intent-based communication, delegation, and trust between agents. Goal decomposition at Level 5 increasingly involves multiple agents collaborating, and the governance of those collaborations requires wire-level identity and authority that current infrastructure does not natively provide.

Level 5 is common in research environments and fragile in production. The reliability gap between “this worked in the demo” and “this works consistently in the wild with real data, real edge cases, and real consequences” is significant. The governance challenge at Level 5 is that the agent’s behavior is genuinely difficult to predict solely from the goal specification. The space of possible paths is too large to enumerate in advance.

Level 6: Goal-Adaptive Agents

This is the philosophical cliff.

A Level 6 agent evaluates, adjusts, alters, or generates new goals based on new information, reflection, or changing circumstances. Everything before Level 6 is a sophisticated execution of human-assigned intent. At Level 6, the system begins steering its own direction.

Level 6 is experimental, heavily sandboxed, and rightly so. The moment a system can meaningfully alter its own goals, the governance question is no longer “did this agent do what we intended” but “can we trust that this agent’s self-generated intent remains aligned with what we value.” That is a different and harder question, and the field has not resolved it.

Governing Level 6 will require baked-in constitutional layers, trust alignment, and verifiable safety proofs. It will depend not only on AGTP but also on architecturally distinct governance systems that do not yet exist.

Important clarification: Level 6 is not autonomous either. Even a goal-adaptive agent operates inside infrastructure designed by humans. The adaptation occurs within the bounds humans established. But it is close enough to the autonomy threshold that treating it as equivalent to Levels 1 through 5 would be a serious governance error.
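A toy sketch of adaptation within human-established bounds, assuming those bounds can be reduced to a budget cap and a ban on touching production. Real constitutional layers would be far richer and, as noted above, largely do not exist yet. All names here are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass(frozen=True)
class Goal:
    objective: str
    budget: float
    touches_production: bool


@dataclass
class GoalGuard:
    """Human-set bounds that every self-generated goal must pass."""
    budget_cap: float
    current: Goal
    history: List[Tuple[str, str]] = field(default_factory=list)

    def propose(self, new_goal: Goal) -> bool:
        within_bounds = (
            new_goal.budget <= self.budget_cap
            and not new_goal.touches_production
        )
        if within_bounds:
            # The agent steers, but only inside the fence.
            self.current = new_goal
            self.history.append((new_goal.objective, "adopted"))
        else:
            # Outside the fence, the decision returns to a human.
            self.history.append((new_goal.objective, "escalated_to_human"))
        return within_bounds


guard = GoalGuard(budget_cap=1000.0, current=Goal("reduce support backlog", 500.0, False))
adopted = guard.propose(Goal("reduce backlog by improving docs", 800.0, False))
escalated = guard.propose(Goal("rewrite routing rules in production", 200.0, True))
```

The guard can verify that a new goal respects the bounds. It cannot verify that the new goal is one a human would have chosen, and that is the part of the problem that remains open.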

The 2026 Reality Check

Most production agents are Level 1 or Level 2. This is not a criticism. Level 1 and 2 agents are genuinely valuable and represent significant engineering achievement. The governance frameworks that exist today are largely adequate for them if applied rigorously.

Standards like AGENTS.md, SKILL.md, and MCP are already accelerating movement from Level 2 into Level 3 in coding, research, and operational domains.

A small number of advanced systems are reaching Level 4 in specific domains, particularly in coding and research. Emerging protocols such as AGTP will help strengthen Level 4 systems and support the move to Level 5, where goal decomposition has been demonstrated in labs but remains mostly experimental.

Level 6 is still primarily theoretical. It is being explored carefully, under constraints that preserve human oversight at the goal level. It is not in general production deployment and should not be.

The Right Question

This framework is useful because it forces a specific question. Not “when do agents become autonomous?” That question is unanswerable with current technology and largely asks about a future that may be decades away.

The right question is: how far can we push capability while keeping meaningful human authority at the goal level?

Levels 1 through 5 are all consistent with human authority at the goal level. A human sets the goal. The agent executes with increasing sophistication and decreasing granularity of human instruction. Governance at each level is different in complexity but consistent in structure: someone sets the goal, someone deploys the agent, someone is accountable for the outcomes.

Level 6 is where that structure starts to bend. The agent’s goal adaptation is bounded by human-set constraints, but the goal itself is no longer a direct human decision. The governance framework has to adapt accordingly.

The organizations doing this well are the ones that are honest about where their agents actually are on this framework, not where the marketing says they are. Governing a Level 2 agent as though it is Level 5 wastes resources. Governing a Level 4 agent as though it is Level 2 creates the gaps that become incidents.

Know the level. Design the governance accordingly.

Chris Hood is an AI strategist and author of the #1 Amazon Best Seller Infailible and Customer Transformation, and has been recognized as one of the Top 30 Global Gurus for Customer Experience. His latest book, Unmapping Customer Journeys, will be published in 2026.